The Empowerment Promise & The Oracle Fiasco

I’m going to make a promise to you right now. If you give me your attention for the next few minutes, you’re going to walk away knowing exactly why ninety percent of the AI co-pilots being built today are a complete and total waste of capital. More importantly, you’ll learn a precise, physics-based method for architecting artificial intelligence that actually moves your bottom line. We’re going to completely dismantle the corporate obsession with slapping chat boxes on broken workflows, and I’ll show you exactly how to use axiom-driven problem mapping to deploy capital effectively. It’s about turning off the hype and turning on the logic.

Right now, the corporate world is absolutely losing its mind. The market is flooded with panic. Every executive team is rushing to build a generative AI assistant because they’re terrified of being left behind. So, they look at their bloated, inefficient operations, and they think a conversational interface will save them. They assume an AI co-pilot will act as a magical band-aid over decades of technical debt and terrible process design. No, it won’t.

We need to establish a baseline rule before we go any further. You cannot automate a broken process, and you definitely should not make it talk back to you.

If your underlying data structure is garbage, and your incentive models are misaligned, giving your employees a chat box just gives them a faster way to execute the wrong job. It’s an accelerator for dysfunction.

To understand exactly how this plays out in the real world, let’s talk about LexiCorp. They’re a massive, mid-stage enterprise, and they recently orchestrated what we’ll call the Two Million Dollar Oracle Co-Pilot Fiasco.

LexiCorp is bleeding cash in the legal department. The corporate lawyers are billing at eight hundred dollars an hour, and they’re spending forty hours a week manually reading and summarizing two-hundred-page vendor contracts. These Master Services Agreements are dense, highly complex documents filled with fifty-million-dollar liability caps and aggressive Service Level Agreement penalties. It’s a brutal, exhausting operational bottleneck.

The VP of Operations at LexiCorp looks at this bottleneck, and he panics. He calls in the enterprise software reps. The pitch is beautiful. The Oracle vendors promise to build a custom, generative AI co-pilot tailored specifically for the legal team. They claim the AI will ingest those massive contracts, parse the legalese, and instantly generate a clean, five-point bulleted summary of the core risks.

The executives at LexiCorp are thrilled. They write a two million dollar check without blinking. They’re entirely convinced they’ve just solved their margin problem.

Six months later, they launch the tool. The leadership team is sitting in the boardroom, staring at the analytics dashboard, waiting for the efficiency metrics to skyrocket. They’re expecting to see legal review times drop by eighty percent.

Thirty days post-launch, the daily active user count is zero. It flatlined. The lawyers aren’t using the co-pilot. They’re completely ignoring it, and the legal review bottleneck is just as bad as it was before the two million dollar investment.

Why? Because the leadership team committed the ultimate sin of innovation. They engaged in solution-jumping. They built a brilliant technological solution for the completely wrong problem.

The executives at LexiCorp thought the “job” was “reading contracts faster.” They were completely wrong. That isn’t a job. That’s an analogy. They looked at the surface-level symptom, lawyers staring at paper, and assumed reading was the objective.

When we strip this problem down to its atomic truths, the reality looks very different. The undeniable, physical, and economic axiom at the core of a corporate lawyer’s existence isn’t reading. The axiom is the quantification and transfer of financial liability.

A corporate lawyer doesn’t read just to consume words. They’re hunting for systemic risk. They’re looking for the hidden trapdoor in paragraph forty-two that will cost the company fifty million dollars in a breach of contract scenario.

When the shiny new AI co-pilot spit out a clean, conversational summary of the contract, the lawyer couldn’t trust it. The lawyer’s personal law license is on the line. Their career is on the line. The company is at immense financial risk. If the AI hallucinates a single word, or if it misses a subtle, deeply buried indemnity clause, the lawyer is the one getting fired. The chat box doesn’t take the blame; the human does.

So, what did the lawyers actually do? They read the entire two-hundred-page contract anyway to verify that the AI summary was accurate. The co-pilot didn’t eliminate the friction; it just added a highly expensive, redundant step to an already bloated workflow. LexiCorp paid two million dollars to give their lawyers an extra chore.

This is the catastrophic danger of the “Near Miss.” A context-aware search bar or a conversational summary tool feels like innovation. It looks incredible in a PowerPoint deck. But if you don’t understand the axiomatic truth of the job being executed, you’re just building a toy.

We must explicitly enforce this philosophy: We’re testing a hypothesis. We aren’t exploring for a problem.

LexiCorp didn’t isolate the friction. They didn’t validate the actual pain points of the lawyer. They just saw a new technology and explored for a way to use it. They built a solution looking for a problem, and the market rejected it instantly.

If you want to build intelligence that actually scales, you have to stop exploring. You have to start deconstructing the physics of the work. You have to locate the exact, undeniable axiom of the job, and you build the automation to solve that specific truth. Everything else is just expensive noise.

The “Near Miss” of Conversational Interfaces

The human brain learns best through contrast. If I want to teach you what a brilliant, structurally sound AI deployment looks like, I can’t just show you a successful product. I have to show you exactly what almost looks right, but ultimately ends in catastrophic failure. You have to see the mirage before you can understand the architecture.

We call this the “Near Miss.” And in the modern enterprise, the ultimate Near Miss is the conversational interface. It’s the context-aware search bar. It’s the friendly little chatbot sitting in the bottom right corner of your SaaS dashboard, waiting to answer your questions.

The enterprise software vendor is going to tell you that this chatbot will revolutionize your workflow. They’ll say it’s going to save your team thousands of hours. It looks incredibly futuristic in a demo. But the vendor is selling you an illusion. They’re selling you the illusion of speed, and they’re completely ignoring the physics of the actual work.

Let’s go back and look at the disaster at LexiCorp. The executives fell perfectly into the Near Miss trap. They looked at the legal department, and they saw highly paid lawyers moving very slowly through massive vendor contracts. They observed this friction, and they immediately engaged in solution-jumping. They assumed that if they could just make the reading process faster, the margin problem would disappear.

So, they bought the two million dollar generative AI co-pilot. They gave the lawyers a chat interface that could instantly summarize a two-hundred-page document.

It feels like innovation, doesn’t it? It feels like you’re leveraging cutting-edge technology to accelerate your team. But you aren’t. You’re just masking a systemic failure.

Think about the underlying mechanics of what LexiCorp actually did. They didn’t change the incentive structure of the legal department. They didn’t alter the way financial liability is captured or transferred. They left the entirely bloated, manual, archaic contract review process perfectly intact. They just added a chatbot on top of it.

If you automate a fundamentally broken process, you haven’t created value. You’ve just built an accelerator for dysfunction.

When you give an employee a faster way to execute the wrong job, you’re actively destroying capital. If your underlying data structure is a mess, and your organizational incentives are misaligned, a co-pilot will simply help your team execute those misaligned behaviors with terrifying velocity.

At LexiCorp, the AI co-pilot spit out beautiful, bulleted summaries. But because the lawyers were personally on the hook for any missed liabilities, they couldn’t trust the AI. The foundational axiom of the job—the rigorous mitigation of financial risk—was completely ignored by the software developers. The developers thought the job was “summarizing text.” They missed the atomic truth of the workflow entirely.

Because the executives jumped straight to a solution without isolating and validating the actual friction, the entire project collapsed. The lawyers went right back to reading the contracts manually, and the two million dollar software became an expensive paperweight.

This is why we must adopt a radical shift in how we think about technology deployments. We have to kill the exploration mindset.

You have to stop sending your product managers and strategists on vague “listening tours” to figure out where they can inject artificial intelligence into the business. You have to stop holding brainstorming sessions where teams sit in a room and guess what features the user might want. Brainstorming based on existing market conditions just guarantees incrementalism. It guarantees you’ll build another Near Miss.

Instead, we must explicitly enforce this philosophy: We’re testing a hypothesis. We aren’t exploring for a problem.

When you explore, you wander blindly. You end up building chat boxes because they look cool. But when you test a hypothesis, you are operating with targeted efficiency. You isolate a highly specific point of friction first. You validate it conceptually. And then you focus ONLY on the measures that actually matter to that specific, validated friction.

If LexiCorp had stopped exploring and started testing hypotheses, they would’ve realized immediately that “reading speed” was not the constraint. They would’ve realized that the conversational interface was a distraction.

They needed a deterministic, physics-based toolkit to strip the problem down to its core. They needed to stop looking at the software, and start looking at the undeniable axioms of the job itself. If you don’t map the job from the atomic level up, you’ll always build the wrong thing. You’ll build a shiny co-pilot that no one actually needs.

The Hypothesis Creed & Targeted Efficiency

Let’s talk about how corporate research teams actually operate in the real world. It’s usually a total disaster.

When a massive enterprise decides it wants to “do AI,” the leadership team allocates a massive budget. The innovation team takes that budget, and they immediately launch an open-ended “listening tour.” They hire an expensive design agency, and they literally wander around the enterprise, hoping to stumble over a good idea.

The researchers at the agency will pull employees into conference rooms and ask them ridiculous questions. They’ll ask, “Tell me about a time you felt frustrated at work today,” or “Where do you think we could use artificial intelligence in your department?”

This is the absolute height of corporate absurdity. You’re asking tired, overworked employees to invent your business strategy. You’re asking people who are drowning in daily tasks to architect complex technological solutions. It guarantees that you’ll end up building something useless.

What happens during these listening tours? The employees complain about the coffee machine. They complain about the slow intranet. They complain about the fact that they have to click three times to open a specific contract folder.

The design agency takes all of this noise, puts it on a beautiful journey map filled with smiley faces and frowny faces, and presents it to the board. The board looks at the frowny face next to the “opening contracts” step and declares, “We need an AI co-pilot to read and summarize these contracts!”

This entire process is an expensive hallucination. It’s a blind exploration for a problem, and it guarantees that you’ll build a Near Miss.

We have to eradicate this behavior. We must explicitly weave this exact philosophy into our corporate DNA: We’re testing a hypothesis. We aren’t exploring for a problem.

If you explore for a problem, you’ll find a million tiny, irrelevant complaints. But if you test a hypothesis, you’re operating with targeted efficiency.

Targeted efficiency means you don’t spend three months and half a million dollars doing ethnographic research and tracking employee feelings. You isolate a single, massive point of economic friction first.

In our LexiCorp example, the economic friction is obvious. Legal review is costing eight hundred dollars an hour and it’s bottlenecking the entire global sales cycle. That is the friction. You don’t need a listening tour to find it. It’s bleeding out on the balance sheet.

Once we isolate that friction, we validate it against reality. We don’t care how the lawyer feels about the software interface. We care about the mechanical execution of the work. We design a strict hypothesis about what is causing the bottleneck, and we execute ONLY against the parameters that actually matter to that specific friction.

This method creates a dramatic decrease in research costs. You’re no longer boiling the ocean. You’re bringing a magnifying glass to a very specific, highly combustible piece of kindling. You isolate the friction, validate the hypothesis, and ignore the noise.

But how do we actually form that hypothesis? How do we figure out what the lawyer is truly trying to accomplish so we can build the right automation? That brings us to the absolute core of our methodology.

Axiom-Driven Job Mapping

I’m going to murder the concept of “product-centric” journey mapping right now.

If your customer journey map includes the name of a software application, you’ve already failed. If your journey map includes actions like “logging in,” “clicking a button,” “navigating to the dashboard,” or “exporting a file,” you aren’t mapping a job. You’re mapping the limitations of your current technology.

A product-centric map is dangerous because it forces you to think about how to make the current software slightly better. It leads directly to the AI co-pilot trap. You look at the map and you think, “The user is spending too much time clicking these buttons. Let’s give them a voice command to click the buttons for them.”

You’re just paving over a cow path. You’re taking a broken, manual chore and putting a shiny AI wrapper on it.

We have to rebuild our strategy from the physics up. We must rebuild our understanding of the work using cold, hard axioms. We call this Axiom-Driven Job Mapping.

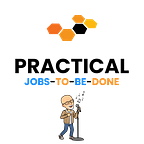

To do this, we use the First Principles Drill. We strip the problem down to atomic truths. What’s an atomic truth? It’s the bedrock reality of why a human is being paid to do something. It has absolutely nothing to do with the software they use.

Let’s return to the corporate lawyers at LexiCorp. If you asked them what their job is, they might say, “I review contracts.” But we know that is just the physical action they’re taking. We use the First Principles Drill to get to the truth.

Why do they review contracts? To find bad clauses. Why do they need to find bad clauses? To prevent the company from being sued. Why does the company care about being sued? Because massive lawsuits threaten the financial survival of the firm.

So, what’s the undeniable, physical, and economic axiom of a corporate lawyer reviewing a Master Services Agreement?

The axiom is: The quantification and transfer of financial liability.

That’s the bedrock. You can’t argue with it. If the lawyer fails to execute that specific transfer of liability, the company loses millions. Every single phase of the job must support and build upon that fundamental, undeniable truth.

Once we have our axiom, we map the job around it. We don’t map the software. We use a strict, universal nine-step chronological structure to map the human struggle: Define, Locate, Prepare, Confirm, Execute, Monitor, Resolve, Modify, Conclude.

Let’s apply this nine-step map to the true axiomatic job at LexiCorp. Let’s look at what the lawyer is actually doing when they’re staring at that two-hundred-page document.

Step 1: Define. The lawyer must define the acceptable parameters of risk for this specific vendor category before they even look at the paper.

Step 2: Locate. They must locate the specific indemnity clauses and penalty triggers buried within a massive, unstructured document.

Step 3: Prepare. They must prepare the counter-arguments and alternative clauses to mitigate the risks they just located.

Step 4: Confirm. They must confirm that the proposed changes align perfectly with the company’s risk playbook.

Step 5: Execute. This is the apex of the job. They must neutralize the quantified financial liability through a verifiable transfer of value (the redlined agreement).

Step 6: Monitor. They must monitor the negotiation pushback from the opposing counsel.

Step 7: Resolve. They must resolve any specific impasses regarding liability caps.

Step 8: Modify. They must modify the final language based on the resolution.

Step 9: Conclude. They must conclude the transfer of liability by finalizing the legal execution of the document.

Look incredibly closely at those nine steps. Do you see the word “read”? Do you see the word “summarize”?

You don’t.

Because reading and summarizing are just archaic, analog methods of locating and executing. They aren’t the job itself.

When the software vendor sold LexiCorp the two million dollar AI co-pilot, they were selling a tool that only vaguely touched Step 2 (Locate). The chatbot located the information and summarized it.

But it did absolutely nothing to help the lawyer Confirm (Step 4) or Execute (Step 5) the actual transfer of liability. In fact, because the chatbot was a black box that hallucinated frequently, the lawyer couldn’t even trust the “Locate” step. They had to go back and read the entire document manually just to be safe.
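To make that coverage gap concrete, here is a minimal sketch of the nine-step map as a data structure. The step names and the axiom come straight from the map above. The `JobStep` enum, the `uncovered_steps` helper, and the decision to credit the co-pilot with Locate only are my own illustrative assumptions, not a formal part of the method.

```python
from enum import IntEnum


class JobStep(IntEnum):
    """The nine chronological steps of the job map, in order."""
    DEFINE = 1
    LOCATE = 2
    PREPARE = 3
    CONFIRM = 4
    EXECUTE = 5
    MONITOR = 6
    RESOLVE = 7
    MODIFY = 8
    CONCLUDE = 9


# The axiom every step has to support, quoted from the map above.
AXIOM = "The quantification and transfer of financial liability"


def uncovered_steps(covered: set[JobStep]) -> list[JobStep]:
    """Return the steps a tool leaves entirely to the human, in job order."""
    return [step for step in JobStep if step not in covered]


# Assumption: the summarizing co-pilot gets credit for Locate and nothing else.
copilot_coverage = {JobStep.LOCATE}
gaps = uncovered_steps(copilot_coverage)

print(f"Axiom: {AXIOM}")
print("Left to the human:", ", ".join(step.name.title() for step in gaps))
```

Run any proposed tool through the same check. If Execute is still in the list of gaps, the human is still carrying the job.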

Axiom-driven job mapping forces you to see the entire battlefield. It forces you to stop looking at the symptoms and start looking at the physics. It forces you to realize that if your AI does not mechanically execute the atomic truth of the job, it’s completely useless.

If you just give an employee a chatbot to summarize a document, you haven’t solved their problem. You’ve abandoned them at Step 2. You’ve left the actual, critical execution step entirely on the shoulders of the human.

This is the power of targeted efficiency and axiomatic mapping. We don’t explore for feelings. We isolate the friction, we define the atomic truth of the work, and we map the execution chronologically. In the next section, I’ll show you exactly how we validate this map to guarantee that the AI we build will actually be adopted by the market.

Validating the Friction

We’ve mapped the nine steps of the job. We’ve stripped away the software interface, and we’re looking at the raw, axiomatic truth of the workflow: Define, Locate, Prepare, Confirm, Execute, Monitor, Resolve, Modify, Conclude.

Now, the executives are staring at the whiteboard. They’re getting excited. They see the map, and they want to throw money at the problem immediately. They want to hire an army of engineers and build a massive, end-to-end AI platform.

Stop right there. That’s a terrible idea.

Just because you’ve mapped the job doesn’t mean you know where to deploy the capital. If you try to write code right now, you’ll fail spectacularly. You have to isolate the exact point of friction, and you have to prove that solving it actually moves the needle.

This is where traditional innovation teams fall into another catastrophic Near Miss. They try to validate the problem by building a Minimum Viable Product. They rush to build a scalable software application. They buy the Oracle co-pilot to test the waters.

I’m going to be brutally honest with you. Building software to test a hypothesis is a massive waste of money. It’s a fundamental misunderstanding of risk.

We don’t write code. We fake the future.

We use a tactic called the Minimum Viable Prototype. Some people call it a Wizard of Oz service. Instead of building a highly complex AI system, you manually fake the exact solution you want to deploy. You use brute human labor to simulate the algorithm. You’re de-risking the logic before you build the factory.

Let’s bring this back to the Oracle co-pilot fiasco at LexiCorp.

The software vendor sold them on automating Step 2: Locate. If the leadership team had used a Minimum Viable Prototype, they would’ve seen the truth immediately.

Before spending two million dollars, they should’ve grabbed three junior paralegals, locked them in a room, and told them to act like the AI. The executives should’ve had the paralegals manually read the contracts, write up a five-point bulleted summary, and hand it to the senior lawyer.

What would’ve happened? The exact same thing. The senior lawyer wouldn’t have trusted the paralegal. They still would’ve read the entire two-hundred-page document to protect their own law license. The friction wouldn’t have disappeared.

The executives would’ve realized that Step 2 was a dead end. But instead of losing two million dollars and six months of engineering time, they would’ve lost four hundred dollars in paralegal wages over a single weekend.
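The arithmetic is worth writing down. This is a small sketch that uses only the two figures quoted above; the variable and function names are mine, and nothing here is measured data.

```python
# Both figures come from the LexiCorp story above; neither is measured data.
SOFTWARE_BUILD_COST = 2_000_000   # dollars spent on the co-pilot
MANUAL_PROTOTYPE_COST = 400       # dollars of paralegal wages for one weekend


def cost_ratio(build_cost: float, prototype_cost: float) -> float:
    """How many manual prototype runs the software budget would have funded."""
    return build_cost / prototype_cost


ratio = cost_ratio(SOFTWARE_BUILD_COST, MANUAL_PROTOTYPE_COST)
savings = SOFTWARE_BUILD_COST - MANUAL_PROTOTYPE_COST

print(f"Prototype runs per software budget: {ratio:,.0f}")
print(f"Capital kept by learning the lesson manually: ${savings:,}")
```

On those numbers, the software budget would have paid for five thousand weekend experiments.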

You test manual interventions across the job map until you find the one that actually shifts the unit economics. When you manually fake Step 5—the actual execution and transfer of financial risk—and you watch the bottleneck vanish, then you’ve validated the friction.
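Here is one way to sketch that test loop. The trial results below are hypothetical numbers I made up to mirror the story (forty hours before, four hours after), and the fifty percent threshold is an arbitrary assumption. The point is the shape of the decision, not the values.

```python
from dataclasses import dataclass


@dataclass
class ManualTrial:
    step: str            # job-map step that humans faked by hand
    hours_before: float  # senior-lawyer hours on the review before the trial
    hours_after: float   # senior-lawyer hours on the review during the trial


def validated_steps(trials: list[ManualTrial], min_reduction: float) -> list[str]:
    """Return steps whose manual fake cut lawyer hours by at least min_reduction (0 to 1)."""
    winners = []
    for trial in trials:
        reduction = (trial.hours_before - trial.hours_after) / trial.hours_before
        if reduction >= min_reduction:
            winners.append(trial.step)
    return winners


# HYPOTHETICAL trial results, for illustration only.
trials = [
    ManualTrial("Locate", hours_before=40, hours_after=40),  # lawyer rereads everything
    ManualTrial("Execute", hours_before=40, hours_after=4),  # lawyer only judges the redline
]

print(validated_steps(trials, min_reduction=0.5))
```

A step only counts as validated friction when faking it by hand visibly moves the expensive hours. Summaries that get reread anyway move nothing.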

When you validate the friction manually, you eliminate risk entirely. You know exactly what the market demands before you spend a single dollar on software engineering. You’re no longer gambling. You’re investing with absolute certainty.

In the next section, I’ll show you exactly how to take this validated data and execute a structural inversion. We’re going to design the true AI solution that LexiCorp should’ve built from the start.

Rebuilding from the Physics Up

We’ve validated the friction. We ran the prototype, and we know with absolute, empirical certainty that Step 5—the actual execution and transfer of financial risk—is the five-alarm fire inside the legal department at LexiCorp.

Now we actually get to build the technology. This is where we separate the amateurs from the architects.

The amateur looks at Step 5, and they try to build a feature. They think, “We’ll just add a ‘draft clause’ button to our AI chatbot.” They want to make the chatbot slightly more helpful. That’s a Near Miss. If you’re forcing a lawyer to copy and paste text from a contract into a separate chat window, type out a prompt, wait for a response, and then paste the result back into the document, you’ve already failed. You’re creating more friction. You’re making the human do the heavy lifting of managing the AI.

We don’t build features. We build structural inversions.

A structural inversion happens when you use technology to completely rip up the unit economics of a business process. We aren’t trying to make the lawyer ten percent faster at typing. We’re executing what we call a Labor Inversion. We want to decouple the revenue or the output of the company from expensive human operational expenditure. We want to shift the fundamental unit of value delivery from an eight-hundred-dollar-an-hour human to a scalable, near-zero-cost AI compute engine.

To do this, you have to realize a profound truth about artificial intelligence in the enterprise: The most powerful AI is completely invisible.

It doesn’t have a cute name. It doesn’t have a greeting animation. It doesn’t ask you how your day is going. A true AI solution operates as a silent orchestration engine in the background.

Let’s rebuild the exact system that LexiCorp should’ve deployed from the very beginning.

Instead of buying a two million dollar conversational co-pilot, LexiCorp should’ve built a background processing engine integrated directly into the email servers and Microsoft Word.

Here is what the lawyer’s workflow should actually look like.

An email arrives from a vendor with a massive, two-hundred-page contract attached. The lawyer doesn’t even know the email has arrived yet. The invisible AI engine intercepts the document instantly. It ingests the text. It cross-references the entire document against the rigid, unbending risk playbook of the company.

The AI locates the toxic indemnity clauses. It prepares the counter-arguments. It confirms the exact fallback language required by the Chief Financial Officer. And then, it executes the redline. The AI goes into the document, strikes out the bad clauses, and inserts the highly specific, legally approved corporate language to neutralize the financial threat.

It does all of this in three seconds, while the lawyer is grabbing a cup of coffee.

When the lawyer finally sits down at the desk, they don’t open a chatbot. They just open Microsoft Word. The contract is already there. The toxic clauses are already highlighted in red. The safe, company-approved fallback clauses are already inserted into the margins.

The system doesn’t ask the lawyer for a prompt. It simply presents the executed work and asks for a verdict. The lawyer reads the redlined clause, uses their highly paid, expert legal judgment, and clicks “Approve.”
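The shape of that engine can be sketched in a few lines. Treat this as a toy under loud assumptions: plain regular expressions stand in for whatever model would actually do the locating, each line of text is treated as one clause, and the playbook rule, the fallback wording, and the sample contract are all invented for illustration. None of it is legal language or a real integration with email or Microsoft Word.

```python
import re
from dataclasses import dataclass


@dataclass
class PlaybookRule:
    name: str      # label for the risk being hunted
    pattern: str   # regex that flags a risky clause (hypothetical)
    fallback: str  # pre-approved replacement language (hypothetical)


@dataclass
class Redline:
    rule: str
    original: str
    proposed: str
    approved: bool = False  # only the lawyer flips this


def propose_redlines(contract: str, playbook: list[PlaybookRule]) -> list[Redline]:
    """Locate clauses that violate the playbook and attach the approved fallback."""
    redlines = []
    # Simplification: every non-empty line is one clause.
    clauses = [line.strip() for line in contract.splitlines() if line.strip()]
    for clause in clauses:
        for rule in playbook:
            if re.search(rule.pattern, clause, flags=re.IGNORECASE):
                redlines.append(Redline(rule.name, clause, rule.fallback))
    return redlines


def approve(redline: Redline) -> Redline:
    """The human verdict: the one step the engine never takes on its own."""
    redline.approved = True
    return redline


playbook = [
    PlaybookRule(
        name="uncapped liability",
        pattern=r"unlimited liability",
        fallback="Liability is capped at the fees paid in the prior 12 months.",
    ),
]

contract = """
Vendor shall deliver the services described in Exhibit A.
Customer accepts unlimited liability for any breach of this Agreement.
"""

queue = propose_redlines(contract, playbook)
for item in queue:
    print(f"[{item.rule}] STRIKE: {item.original}")
    print(f"[{item.rule}] INSERT: {item.proposed}")
```

Notice what is missing: there is no prompt and no chat window. The engine fills a queue of proposed redlines, and `approve` is the only function a human ever has to call.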

Do you see the difference in the physics of this workflow?

With a conversational co-pilot, the human is managing the machine. The human is doing the heavy lifting, the prompting, the checking, and the executing.

With an invisible orchestration engine, the machine manages the heavy lifting. The machine does the locating, the preparing, and the executing. The human is elevated to the only role that actually matters: the final judge of risk.

This is a true Labor Inversion. You’ve taken a forty-hour, brutally manual chore, and you’ve compressed it into a four-hour review session.
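Put numbers on it. This sketch uses the eight-hundred-dollar hourly rate and the forty-hour and four-hour figures from this article. It assumes the rate stays the same and deliberately ignores compute and build costs, so read it as a rough illustration rather than a business case.

```python
HOURLY_RATE = 800   # dollars per lawyer hour, from the article
HOURS_BEFORE = 40   # manual review
HOURS_AFTER = 4     # verdict-only review of pre-redlined contracts


def review_cost(hours: float, rate: float = HOURLY_RATE) -> float:
    """Human cost of a review at a flat hourly rate."""
    return hours * rate


cost_before = review_cost(HOURS_BEFORE)
cost_after = review_cost(HOURS_AFTER)
reduction = 1 - cost_after / cost_before

print(f"Before: ${cost_before:,.0f}")
print(f"After:  ${cost_after:,.0f}")
print(f"Human cost removed: {reduction:.0%}")
```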

The lawyer is no longer hunting for needles in a haystack. They are simply verifying the work of a tireless, invisible machine that perfectly understands the axiomatic truth of the job. The liability is quantified. The risk is transferred. The job is done.

This is how you flip the unit economics of a company. The cost to process a massive vendor agreement plummets. The margins of the company explode. The pipeline velocity of the sales team accelerates because contracts are no longer stuck in legal purgatory for three weeks.

You didn’t achieve this by exploring for a problem. You didn’t achieve this by buying a hyped-up chatbot. You achieved this by deconstructing the problem down to its core physics, validating the exact point of friction with manual prototypes, and deploying a structural inversion to crush that friction entirely.

Artificial intelligence is the most powerful operational lever we have ever seen in the history of business. But if you treat it like a magical toy, it will burn your capital to the ground. You have to stop building co-pilots that talk. You have to start building invisible engines that execute.

The End

Before you clicked on this article, you were likely caught in the exact same trap as everyone else. The enterprise software machine is incredibly loud, and it’s designed to make you panic. Vendors want you to believe that if you don’t buy their generative AI co-pilot today, your business will die tomorrow. They want you to solution-jump.

But you no longer have to operate in a state of panic. You’re completely immune to the Near Miss trap.

When the board demands an AI strategy, you don’t have to throw together a slide deck full of meaningless buzzwords. You don’t have to send your product managers on vague listening tours to ask employees how they feel. You don’t have to run brainstorming sessions to guess what features your market might want.

You now possess a completely deterministic, physics-based toolkit for deploying capital.

You’ve got the First Principles Drill. You know exactly how to strip away the software interface and isolate the undeniable, economic axiom of the work. You know how to find the atomic truth.

You’ve got the Axiom-Driven Job Map. You know that every workflow breaks down into nine strict, chronological steps, and you know how to map those steps without ever referencing a screen, a click, or a button.

You’ve got the Minimum Viable Prototype. You know that building software to test a hypothesis is a catastrophic waste of money. You know how to fake the future manually. You can de-risk the logic and isolate the true friction before you spend a single dollar on engineering.

And finally, you’ve got the Structural Inversion. You know that true artificial intelligence doesn’t talk to you. It’s an invisible orchestration engine. It doesn’t just speed up a broken process; it flips the unit economics of your entire business model. It elevates the human from a manual laborer to a final judge of risk.

The corporate world is going to keep setting money on fire. Your competitors are going to keep buying shiny chatbots that their employees will completely ignore. They’re going to keep masking their operational failures with conversational wrappers.

But you aren’t going to do that. You’ve been handed a weapon against incrementalism. You have the blueprints to actually alter reality.

You aren’t a firefighter chasing symptoms anymore. You’re an architect. You know exactly how to build intelligence that actually executes.

Now, go build it.

Are you interested in innovation, or do you prefer to look busy and just call it innovation? I like to work with people who are serious about the subject and willing to challenge the current paradigm. Is that you? (My availability is limited.)

Book an appointment: https://pjtbd.com/book-mike

Email me: mike@pjtbd.com

Call me: +1 678-824-2789

Join the community: https://pjtbd.com/join

Follow me on 𝕏: https://x.com/mikeboysen


Q: Does your innovation advisor provide a 6-figure pre-analysis before delivering the 6-figure proposal?