The Empowerment Promise & The Decade of Lost Bandwidth

Listen closely, because I’m going to show you exactly why the tech industry just burned ten years trying to make customer support bots sound like empathetic humans. More importantly, I’m going to give you the exact framework to stop bolting generative AI onto your “Contact Us” page and start architecting silent, automated engines that actually fix the broken pipes in your business.

If you’re sitting there looking at your company’s AI roadmap and feeling a creeping sense of dread that you’re just building a very polite wall between your brand and your furious customers, you’re not alone. You’re experiencing the symptoms of a systemic, industry-wide failure. But by the time you finish reading this guide, you won’t just understand why your “ticket deflection” strategies feel like they’re spinning their wheels. You’ll have the cognitive tools to look at any support initiative, instantly strip away the conversational noise, and deploy a computational bulldozer that obliterates the need for customer support entirely. We aren’t going to talk about building digital buddies today. We’re going to talk about manipulating root causes and physics.

To understand how to fix the future, we’ve got to understand how we completely derailed the past. For the last ten years, the customer experience world has suffered from a profound case of cognitive dissonance. We treated customer support as a communication problem rather than an operational failure.

Think about the sheer volume of mental bandwidth we’ve wasted. We became obsessed with “deflection.” From the early days of rigid decision-tree bots to the current tidal wave of generative AI chat widgets, the ultimate goal always seemed to be making the machine sound like a deeply empathetic agent. The logic seemed sound on the surface: customers are reaching out to talk, so naturally, we should give them an artificial intelligence to talk to.

But a customer support ticket isn’t a conversation. It’s a symptom. It’s the mathematical, physical result of a failure in your supply chain, your billing software, or your product quality.

When we force an angry customer to sit down and type a prompt into a text box to figure out where their missing refund is, we haven’t actually solved the problem. We’ve just changed the interface of their struggle. We confused the interface (chat) with the outcome (resolution).

Imagine you buy a brand new television, and it explodes the second you plug it into the wall. You walk back into the store carrying the charred plastic. But instead of taking the TV and handing you a refund, the manager just stands there and recites a highly articulate, flawless poem about the store return policy. The grammar is perfect. The empathy is palpable. But you still don’t have your money.

That’s exactly what a generative AI support bot is doing on your website. It’s a half-million-dollar parrot apologizing for a broken factory.

I’ve always said, it isn’t your customer service, it’s the service itself.

The core argument here is simple but abrasive: we wanted a polite shield, but we desperately needed a bulldozer. A conversational interface fundamentally relies on the customer to act as the diagnostic investigator. The customer has to realize they have a problem, navigate to your website, find the chat bubble, type the prompt, wait for the AI to retrieve a knowledge base article, read it, and then somehow execute the fix themselves. That isn’t eliminating friction. That’s outsourcing your operational debt to the buyer. It’s exhausting, and it’s why customer satisfaction scores for pure chat wrappers are notoriously abysmal.

This brings us to a massive, uncomfortable truth about innovation. Innovation rarely fails because we lack engineering talent. It fails because we build brilliant solutions for the wrong problems. The modern enterprise is addicted to “solution-jumping.” When executives see a powerful new technology like a Large Language Model, their immediate instinct is to ask, “How do we put this on the support site to answer FAQs?”

They treat the surface-level symptom as the root cause. They assume the problem is that their customers don’t have enough “access to information.” But information isn’t execution. You can have a chatbot that instantly retrieves every single refund policy in your entire corporate history, but if it doesn’t structurally alter the unit economics of how you resolve failures, you’ve just built a very expensive encyclopedia.

We need a completely new mental model. We need a rallying cry to snap us out of this conversational trance. If you want ideas to stick in the corporate world, they must be salient, they must be surprising, and they must carry a symbol.

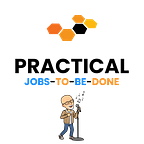

So, here is your new slogan: Kill the Chatbot, Free the Axiom.

What does that actually mean? It means we need to stop starting our innovation pipelines by looking at the support interface. We need to start by deconstructing the customer journey all the way down to its undeniable, foundational truths—its axioms. An axiom isn’t a hunch. It isn’t an industry analogy. An axiom is a fundamental physical, chemical, or mathematical truth that can’t be argued with.

When you kill the chatbot, you stop asking, “How can we help the user talk to us about their broken product?”

When you free the axiom, you start asking, “What is the absolute theoretical minimum cost and time required to execute this repair or refund if we removed the human from the loop entirely?”

We’re going to move away from the “Monolithic Fallacy” where teams waste six months building a conversational minimum viable product before they’ve even mathematically validated the underlying struggle. We’re going to apply the ruthless efficiency of First Principles thinking. We’re going to look at the exact Job-to-be-Done, strip away the analogical reasoning that has poisoned traditional customer experience, and we’re going to engineer a resolution moat that your competitors can’t touch.

You’re about to see this play out in the real world. In the next section, we aren’t going to look at a theoretical software company. We’re going to dive into the messy, high-stakes trenches of global e-commerce. We’re going to look at a company that spent millions trying to build the ultimate digital support agent, only to realize that the entire premise was fundamentally flawed.

Get ready, because we’re going to deconstruct the “Near Miss” of Aura Retail, and it’s going to change the way you look at customer support forever.

The Near Miss (The Aura Retail Case Study)

I want you to picture the global call center matrix for Aura Retail. They’re a massive direct-to-consumer apparel brand moving millions of packages a month. If you’ve never stood in a customer experience command center during the holiday season, you need to understand that the environment is absolute, unrelenting chaos.

The support agents are staring at overwhelming queues. They’re tracking delayed shipments caught in winter storms. They’re managing furious customers demanding refunds for items that arrived damaged. If a warehouse barcode scanner goes down, it creates a massive ripple effect that spawns ten thousand support tickets in a single afternoon.

A few years ago, the executive team at Aura looked down at this chaotic floor and made a classic, fatal error. They noticed that their agents were spending sixty percent of their time just explaining the labyrinthine, 14-step return policy to frustrated buyers. The executives thought they had found the root cause of the friction. They told themselves, “Our customers just have too many questions. We need to give them a conversational AI agent to seamlessly explain the policies.”

Enter “Omni-Agent.”

The leadership at Aura spent two million dollars working with a top-tier vendor to build a custom Large Language Model interface. It was bolted directly onto the bottom right corner of their homepage. I’ve got to admit, if you looked at the demo, it was a beautiful piece of software.

This is what we call a “Near Miss.” The human brain learns best through contrast, so we must explicitly look at what almost works to understand why it ultimately fails.

In the boardroom, Omni-Agent looked like the future of retail. A customer could type, “My jacket arrived with a broken zipper, how do I get my money back?” The system would instantly parse the intent, check the purchase history, cross-reference the 90-day return window, and type back a flawless, empathetic response in one of forty languages: “I’m so incredibly sorry to hear that your jacket arrived damaged! That isn’t the Aura standard. To process a return, simply print the attached label, find a local shipping drop-off, box the item, and once we scan it at our facility in 14 days, your refund will hit your account.”

The executives applauded. They popped champagne. They rolled it out to the site and waited for support costs to plummet.

Within a month, customer churn skyrocketed. The buyers abandoned the two-million-dollar AI and went right to Twitter to publicly scream at the brand.

Why did it fail?

It failed because Aura built a conversational overlay for a fundamentally broken “Repair Journey.” They made it easier to talk about the damaged jacket, but they didn’t do a single thing to actually fix the defective zipper that caused the ticket in the first place, nor did they fix the agonizing 14-day delay to get the money back. They built a highly articulate FAQ engine, but they ignored the physics of the customer experience.

Let’s break down the reality of what the buyer was actually dealing with. Omni-Agent could brilliantly explain the return process. But the human being still had to find a printer. The human still had to locate packing tape. The human still had to drive through traffic to a shipping center. And finally, the human still had to wait two weeks for the legacy accounting system to release their funds.

The AI didn’t eliminate the friction. It just served as a highly efficient messenger for a terrible process.

This is the exact trap we discussed in the previous section. Aura treated the lack of conversational policy explanations as the root cause of their pain. But the actual root cause was the immense physical and financial friction required to reverse a logistics error. The customers didn’t want to chat with a digital assistant. They wanted a working jacket and their money back.

When you build a support chatbot, you’re relying on the user to be the operational orchestrator. You’re relying on the customer to have the patience to navigate your broken internal silos. But in a competitive, high-stakes market, buyers don’t have the bandwidth to be your free administrative labor.

The Near Miss of Omni-Agent is playing out in Fortune 500 companies across the globe right now. We’re spending billions of dollars to give our support sites a voice, without ever stopping to ask if the software should just be doing the work quietly in the background. We’re building tools that politely deflect customers we shouldn’t have angered in the first place.

We’ve got to stop. We’ve got to strip the product away entirely and look at the bare, uncomfortable bones of the operation.

If we want to build something that actually disrupts an industry, we can’t start with the technology. We can’t look at a generative AI model and ask what it can say for us. We’ve got to look at the “Repair Journey” itself, strip away every single assumption we hold about how support gets done, and isolate the undeniable, foundational axioms of the struggle.

If you don’t understand the physics of the job, you’ll always end up building a better parrot. In the next section, we’re going to look at exactly how we strip the product away and map the atomic truth of the work.

The Hypothesis Creed

Here is the dirty secret about most enterprise AI rollouts: the leadership teams deploying them usually don’t actually know what is fundamentally broken in their operations.

They know their support centers are overwhelmed, or they know their Net Promoter Scores are tanking, but they can’t isolate the exact mechanical failure. So, they buy a generic, open-ended conversational chatbot, stick it on the website, and hope the customers will interact with it enough to magically reveal the friction. They think a blank chat window is an innovation strategy.

It isn’t. It’s an absolute abdication of leadership.

When you put a conversational AI in front of a user and simply ask, “How can I help you today?”, you’re fishing. You’re forcing the user to become the system architect. You’re exploring for a problem, and that’s the most expensive, wasteful way to use artificial intelligence.

If you want to stop building expensive digital parrots and start building computational bulldozers, you must adopt a radical new mindset. I want you to write this rule on your whiteboard right now. This is the Hypothesis Creed:

“We are testing a hypothesis. We are not exploring for a problem.”

You shouldn’t write a single line of code, and you certainly shouldn’t buy a multi-million-dollar AI wrapper, until you’ve formulated a hyper-specific hypothesis about a structural vulnerability in your business. Your AI shouldn’t be an open-ended support assistant. It must be a laser-guided missile aimed directly at a predetermined, heavily validated failure point.

Let’s look back at Aura Retail. If they had followed the Hypothesis Creed, they wouldn’t have built Omni-Agent. They wouldn’t have said, “Let’s give our customers a chatbot to explore our return policies.” They would’ve isolated the exact, painful bottleneck and said, “We hypothesize that forcing a customer to perform the physical labor of printing, packing, and shipping a defective $40 item costs us more in lifetime churn than the actual wholesale cost of the jacket itself.”
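One way to make a hypothesis like that testable is to write it down as an inequality. The sketch below is a minimal illustration, not Aura’s actual model: the class name, the churn probabilities, the lifetime value, and the wholesale cost are all hypothetical placeholders you would replace with your own measured numbers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReturnHypothesis:
    """Inputs for testing the forced-return hypothesis (all values are illustrative)."""

    wholesale_cost: float            # what it costs the brand to write off the item
    customer_lifetime_value: float   # expected future margin from a retained customer
    churn_if_forced_return: float    # probability the customer leaves after a manual return
    churn_if_instant_refund: float   # probability the customer leaves after an instant refund

    def expected_churn_cost(self) -> float:
        """Extra lifetime value lost, on average, by forcing the manual return."""
        extra_churn = self.churn_if_forced_return - self.churn_if_instant_refund
        return extra_churn * self.customer_lifetime_value

    def is_supported(self) -> bool:
        """True when forcing the return costs more than writing off the item."""
        return self.expected_churn_cost() > self.wholesale_cost


# Hypothetical numbers for a defective $40 jacket.
jacket = ReturnHypothesis(
    wholesale_cost=15.0,
    customer_lifetime_value=300.0,
    churn_if_forced_return=0.30,
    churn_if_instant_refund=0.10,
)
print(jacket.is_supported())
```

If `is_supported()` comes back false with real data, the hypothesis is dead and you move on to the next one. Either way, you learned it before you bought the chatbot.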

That’s a hypothesis you can test. That’s a vulnerability you can target. But to formulate a hypothesis that sharp, you can’t look at the chat interface. You’ve got to strip the product away completely.

Axiom-Driven Job Mapping (Stripping the Product)

The reason companies like Aura fall into the chatbot trap is that they’re infected with “product-centric” thinking. When they try to understand their customers’ struggles, they only look at the digital screens those customers are currently clicking.

If you sit down with an angry buyer at Aura and ask them what their goal is, a bad researcher will document: “The customer wants an easier way to navigate the support chat menu to find the refund button.”

Wrong. That isn’t their goal. That’s just a depressing description of the clunky, broken hoops they’re currently forced to jump through. If you map their job based on that description, you’ll inevitably build them another tool to “navigate chat menus.” You’ll build them a better chatbot. You’ll pave the cow path instead of building a highway.

We’ve got to kill the product-centric Job-to-be-Done. We’ve got to look at the 17 Universal Journeys—specifically the “Repair Journey” or the “Replacement Journey”—and we’ve got to strip away the screens, the keyboards, and the chat windows until we’re staring at the atomic truth of the work. We’ve got to map the axioms.

An axiom isn’t a hunch. It isn’t an industry analogy. An axiom is a fundamental reality that can’t be argued with. It’s the absolute bedrock of the problem. What is the atomic truth of processing an e-commerce return?

It isn’t a communication problem. It’s a financial and logistics problem.

Processing a return is the brutal, mathematical challenge of reversing a transaction and moving physical mass backward through a supply chain. You’re fighting the relentless, unforgiving constraints of time, shipping costs, and inventory reconciliation.

When you map the job through the lens of those axioms, everything changes. We don’t map how the customer clicks a dropdown menu or types a complaint into a chat box. We map the pure physics of the execution. We map how they define the failure. We map how they locate the proof of purchase. We map how they physically prepare the item for transport, and we map how the financial ledger is mathematically executed.

Every single phase of the job map must be grounded in these undeniable truths. The software interface doesn’t matter yet. We’re purely mapping where the physics of the operation breaks down.

When Aura actually stripped away their software and looked at the axioms of the “Repair Journey,” the reality was horrifying. They realized that a human being—no matter how articulate the AI chatbot is—shouldn’t be performing manual logistics labor for an enterprise company.

A conversation doesn’t solve a logistics reversal. A customer doesn’t need to talk to the AI about the broken zipper. They need the system to instantly, silently process the failure, calculate that shipping the item back is financially foolish, and immediately push a refund to the ledger.

When you map the axioms, you realize that the conversation shouldn’t be happening at all. You realize that the chat interface is just getting in the way of the computational bulldozer.

Once we’ve mapped the atomic truth and isolated the exact mathematical failure, we’re ready to build the actual solution. We’re ready to deploy the most powerful weapon in the architect’s arsenal: Structural Inversion.

Isolate, Validate, and Targeted Efficiency

Once we map the atomic truth of the work, we’ve got to prove that our hypothesis is actually destroying value. We’ve got to isolate the friction and validate it.

In traditional R&D, companies spend millions of dollars running massive, open-ended CSAT surveys. They ask users if they like the new website design, or if the support bot was “polite.” I’m telling you right now, that’s a phenomenal way to light capital on fire. Users don’t know how to architect a systemic solution; they only know they’re frustrated.

We don’t explore. We use Targeted Efficiency.

Because we’ve already mapped the job down to its foundational physics, we don’t have to ask broad questions. We look at the exact axiom that we hypothesize is failing. For Aura Retail, the failing axiom is the physical preparation and logistical delay required to execute a product replacement.

So, we don’t ask the customers about their feelings regarding the chatbot. We go to the operational database and we measure the exact cost of that specific failure. We isolate the pain. We measure the exact duration of the delay from the moment the customer opens the ticket to the moment the funds hit their bank. We quantify the operational overhead of paying warehouse staff to inspect broken zippers. We look at the churn rate of customers who are forced to wait 14 days for resolution.
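As a rough sketch of what that measurement could look like, assume each ticket record holds the time the ticket was opened, the time the refund settled, and whether the customer later churned. The record layout, the sample rows, and the function names below are hypothetical; only the 14-day threshold comes from the Aura example.

```python
from datetime import datetime
from statistics import median

SECONDS_PER_DAY = 86_400

# Hypothetical sample records: (ticket_opened, refund_settled, customer_churned)
tickets = [
    (datetime(2024, 1, 2), datetime(2024, 1, 16), True),
    (datetime(2024, 1, 3), datetime(2024, 1, 20), True),
    (datetime(2024, 1, 5), datetime(2024, 1, 8), False),
    (datetime(2024, 1, 7), datetime(2024, 1, 21), False),
]


def resolution_days(opened: datetime, settled: datetime) -> float:
    """Days between the ticket opening and the funds reaching the customer."""
    return (settled - opened).total_seconds() / SECONDS_PER_DAY


def churn_rate(flags: list[bool]) -> float:
    """Share of customers who churned; 0.0 for an empty cohort."""
    return sum(flags) / len(flags) if flags else 0.0


def friction_report(records, slow_threshold_days: float = 14.0) -> dict[str, float]:
    """Median resolution time, plus churn split by slow versus fast resolution.

    Expects at least one record; statistics.median raises on an empty list.
    """
    durations = [resolution_days(opened, settled) for opened, settled, _ in records]
    slow = [c for (_, _, c), d in zip(records, durations) if d >= slow_threshold_days]
    fast = [c for (_, _, c), d in zip(records, durations) if d < slow_threshold_days]
    return {
        "median_days": median(durations),
        "slow_churn_rate": churn_rate(slow),
        "fast_churn_rate": churn_rate(fast),
    }


report = friction_report(tickets)
print(report)
```

Notice what is missing: there is no sentiment score and no “was the bot polite?” field. The report only contains the numbers tied to the one friction point under test.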

By surveying and measuring ONLY the metrics that matter to that specific friction point, we dramatically decrease research costs. We aren’t boiling the ocean to figure out if people like artificial intelligence. We’re mathematically validating that this single, undeniable bottleneck is the exact constraint choking the business.

When Aura actually looked at the data, they didn’t find a communication problem. They found a massive efficiency delta. The theoretical minimum time to issue a digital refund using raw compute power is measured in milliseconds. The actual commercial time it took to force a customer through a physical return process was measured in weeks. That’s an unacceptable gap.
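To put a number on that delta, you can express it as a simple ratio of actual time to theoretical minimum. In the sketch below, the 14-day figure is Aura’s return window from the case study; the 500-millisecond refund time is an assumed stand-in for “milliseconds,” and the function name is hypothetical.

```python
from datetime import timedelta


def efficiency_delta(actual: timedelta, theoretical_minimum: timedelta) -> float:
    """How many times slower the real process is than its theoretical floor."""
    if theoretical_minimum <= timedelta(0):
        raise ValueError("theoretical_minimum must be a positive duration")
    # Dividing one timedelta by another yields a plain float ratio.
    return actual / theoretical_minimum


actual_refund_time = timedelta(days=14)             # from the Aura example
assumed_compute_time = timedelta(milliseconds=500)  # assumption, for illustration only

print(efficiency_delta(actual_refund_time, assumed_compute_time))  # 2419200.0
```

Under that assumption, the physical return process is roughly 2.4 million times slower than the floor. The exact multiple matters less than its order of magnitude.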

We isolated the pain, and we proved it existed. Now, we’re ready to build.

Building the Real Option (The Structural Inversion)

This is where the magic happens. We’ve got a heavily validated, mathematically proven problem. But we aren’t going to build a chat wrapper to apologize for it. We’re going to deploy the most aggressive maneuver in the strategic playbook: Structural Inversion.

If you want to create a true monopoly, you can’t just offer a sustaining feature update. You must radically alter the unit economics of the solution. You must invert the structure of how value is delivered.

For Aura Retail, the ultimate constraint is the physical logistics loop and the customer labor required to trigger it. So, we apply a Labor Inversion. We completely decouple the execution of the refund from human operational effort and physical shipping constraints.

We don’t give the customer an AI buddy to talk to. We remove the need for the customer to initiate a support ticket entirely.

Instead of “Omni-Agent” the chatbot, Aura should’ve built an autonomous agentic resolution engine. This is an AI that connects directly to the supply chain telemetry and the financial ledger. If the delivery carrier flags a package as heavily damaged in transit, or if a specific batch of jackets is mathematically proven to have defective zippers based on early failure rates, the AI engine acts proactively. It instantly calculates the wholesale loss, realizes a return is inefficient, and automatically triggers a replacement shipment before the customer even opens the box. The system simply sends a proactive email: “We detected an issue with your delivery. A replacement is already on the way, free of charge. Keep or discard the original.”

The AI does the heavy lifting silently. The friction is obliterated. No chat window ever opens.

The human support agents aren’t fired; they’re elevated. They’re no longer acting as human punching bags for angry customers. They’re acting as exception handlers, managing the extreme edge cases that the AI flags for complex review.
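Here is a minimal sketch of the decision logic such an engine might run for each shipment. It is an illustration under stated assumptions, not a reference design: the `Shipment` fields, the thresholds, the salvage fraction, and the return logistics cost are all hypothetical, and a real system would read them from carrier telemetry and the ledger.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    NO_ACTION = "no_action"        # nothing failed; stay silent
    AUTO_REPLACE = "auto_replace"  # ship a replacement, tell the customer to keep or discard
    HUMAN_REVIEW = "human_review"  # edge case routed to a human exception handler


@dataclass(frozen=True)
class Shipment:
    order_id: str
    wholesale_cost: float
    carrier_flagged_damaged: bool
    batch_failure_rate: float  # observed early failure rate for this item's batch


def decide(
    shipment: Shipment,
    *,
    batch_failure_threshold: float = 0.05,  # assumed cutoff for a "defective batch"
    return_logistics_cost: float = 12.0,    # assumed cost to ship back and inspect
    salvage_fraction: float = 0.5,          # assumed share of value recovered on return
) -> Action:
    """Pick an action without waiting for the customer to open a ticket."""
    failure_detected = (
        shipment.carrier_flagged_damaged
        or shipment.batch_failure_rate >= batch_failure_threshold
    )
    if not failure_detected:
        return Action.NO_ACTION

    # A return is inefficient when hauling the item back costs at least
    # as much as the value the brand would recover from it.
    recoverable_value = shipment.wholesale_cost * salvage_fraction
    if return_logistics_cost >= recoverable_value:
        return Action.AUTO_REPLACE
    return Action.HUMAN_REVIEW


jacket = Shipment("A-1001", wholesale_cost=15.0, carrier_flagged_damaged=True, batch_failure_rate=0.01)
print(decide(jacket))  # Action.AUTO_REPLACE
```

The design choice worth noticing is the third branch. The engine does not try to resolve everything; anything where a return might actually be worth the trouble goes to a person, which is exactly the exception-handler role described above.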

That’s a Real Option. You aren’t just buying a software update; you’re buying a fundamentally new business model. The marginal cost of resolving a logistics failure drops. The time to resolution drops from weeks to milliseconds. You’ve built a moat that your competitors, who are still busy trying to teach their support bots to say “Hello,” can’t possibly cross.

In Conclusion

I’m not going to give you a summary of what we just covered. Summaries are for people who weren’t paying attention, and if you’ve made it this far, you’re wide awake.

Here is what you possess right now that you didn’t have when you started reading.

You’ve got a completely new lens for evaluating customer experience technology. The next time a vendor walks into your boardroom and tries to sell you a sleek, conversational chatbot to “deflect tickets,” you won’t see a shiny new toy. You’ll see a trap. You’ll see a half-million-dollar parrot apologizing for a broken process.

You now possess the Socratic scalpel required to strip away the software interface and expose the raw, physical axioms of the work your customers are actually trying to accomplish. You understand that true innovation doesn’t come from exploring for problems in an open chat window. It comes from isolating a structural vulnerability, validating the friction, and applying an inversion that breaks the economics of your industry.

Customer support is an operational failure. It isn’t a conversation.

Stop talking to your customers about your broken pipes. Start architecting the bulldozer that fixes the plumbing.

Are you interested in innovation, or do you prefer to look busy and just call it innovation? I like to work with people who are serious about the subject and are willing to challenge the current paradigm. Is that you? (My availability is limited.)

Book an appointment: https://pjtbd.com/book-mike

Email me: mike@pjtbd.com

Call me: +1 678-824-2789

Join the community: https://pjtbd.com/join

Follow me on 𝕏: https://x.com/mikeboysen
