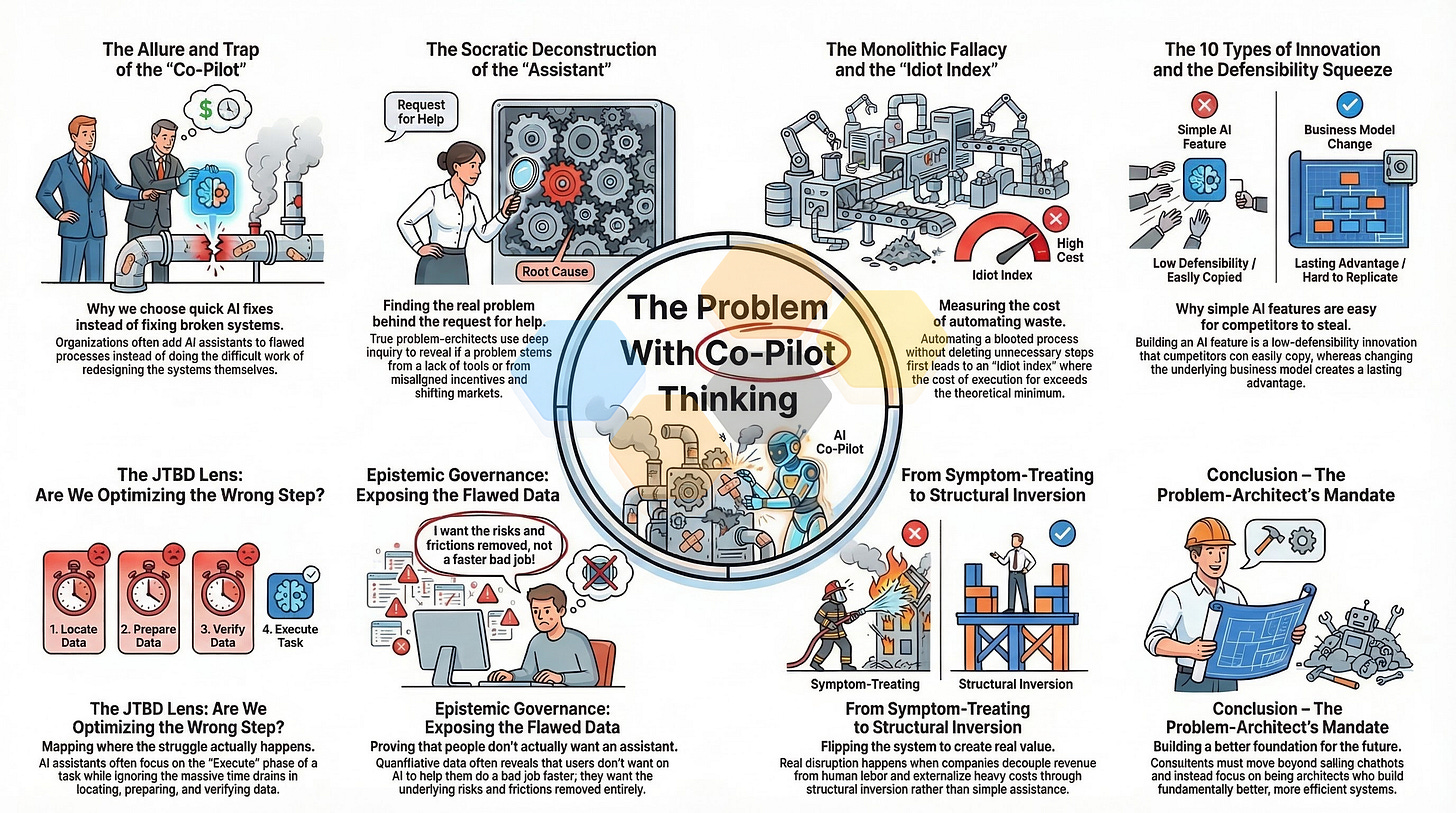

Introduction – The Allure and Trap of the “Co-Pilot”

Walk into any enterprise boardroom today, and the air is thick with a singular, overriding technological mandate: We need an AI Co-Pilot. The pitch practically writes itself. Faced with sprawling legacy systems, complex operational workflows, and employees drowning in digital friction, the modern executive is desperate for a lifeline. Enter the “Co-Pilot”—a sleek, intelligent, conversational overlay designed to sit neatly on top of the corporate chaos. It promises to read the unreadable, summarize the unmanageable, and click the un-clickable. It’s the ultimate digital concierge.

Yet, for the seasoned strategist and the problem-architect, the sudden ubiquity of the Co-Pilot raises an immediate, flashing red alarm.

“Co-Pilot thinking” has become the modern enterprise reflex to add an assistive overlay—whether digital, AI-driven, or even human—to an existing process rather than doing the difficult, unglamorous work of redesigning the flawed system itself. It’s the purest manifestation of the corporate additive bias. When faced with a problem, the default human instinct is to add a new part, a new management layer, or a new software tool to mitigate the pain. In the era of Generative AI, this additive bias has been weaponized. Instead of asking, “Why is this system so difficult to use?” or “Should this process even exist?”, organizations are asking, “How can we build an AI assistant to help our employees survive this process?”

This is the trap. The Co-Pilot is alluring precisely because it requires no structural courage. It allows an organization to maintain its legacy architecture, preserve its bloated supply chains, and ignore its misaligned incentive structures, all while giving the illusion of rapid technological advancement.

To understand why this is so dangerous, we must look at how innovation is strategically categorized and funded within large organizations. In advanced innovation governance, strategic investment pathways are typically divided into three distinct buckets:

Pathway A (Persona Expansion): A lateral move selling the existing, optimized core solution to an adjacent Job Executor down the value chain.

Pathway B (Sustaining Innovation): Fortifying the core product or defending the existing business model.

Pathway C (Disruptive Long-Term Vision): A structural inversion leap that renders the current pipeline obsolete and shifts the market dynamics entirely.

The grand illusion of Co-Pilot thinking is that it’s almost universally sold to the C-suite as a Pathway C disruption. Because it utilizes cutting-edge Large Language Models (LLMs) and advanced neural networks, executives authorize massive budgets under the belief that they’re fundamentally transforming their unit economics.

They aren’t.

A Co-Pilot is, by definition, a Pathway B Sustaining Innovation. It’s a defense mechanism for the core. It doesn’t replace the underlying system; it relies upon it. It doesn’t invert the labor model to drive the marginal cost of delivery to zero; it merely attempts to make the existing human operational expense (OPEX) marginally faster. By slapping a highly intelligent chatbot on top of archaic Enterprise Resource Planning (ERP) software, you haven’t disrupted the ERP market—you’ve simply made your own bad software slightly more tolerable for your employees.

This monolithic fallacy—treating a sustaining feature update as if it were a structural disruption—obscures the true cost of Co-Pilot thinking. Traditional business cases demand ROI predictions for products that don’t even exist yet. This forces teams to invent numbers, leading to the funding of safe, incremental ideas and the death of disruptive ones.

True problem-architects recognize that the highest-leverage activity in business isn’t building tools to navigate friction; it’s having the courage to pause, deconstruct the demand, and delete the friction entirely. When an enterprise rushes to build a Co-Pilot, they’re bypassing the crucial Option to Explore phase of innovation (where we ask if the problem is even real) and the Option to Validate phase (where we quantify the struggle). They leap straight into the Option to Execute, pouring capital into a Minimum Viable Product without proving the underlying logic.

The firefighter is rewarded for speed and action. When a customer complains that a reporting suite takes 40 clicks to navigate, the firefighter immediately builds a voice-activated Co-Pilot to perform those 40 clicks automatically. The problem-architect, however, is rewarded for clarity. The architect asks why 40 clicks are required, traces the complexity back to an un-validated product roadmap, and deletes the reporting suite entirely in favor of a structurally simplified, automated data push.

As we’ll explore in the following sections, Co-Pilot thinking is the enemy of the architect. It institutionalizes waste. It cements poor design. And worst of all, it gives organizations a false sense of security, convincing them they’re innovating when, in reality, they’re just helping their employees execute the wrong things faster.

The Systematic Deconstruction of the “Assistant”

Innovation rarely fails because of a lack of engineering talent; it fails because teams build brilliant solutions for entirely the wrong problems. The modern enterprise is hopelessly addicted to “solution-jumping,” treating surface-level symptoms as if they’re the root cause. This is exactly where Co-Pilot thinking thrives—in the muddy waters between a symptom and a cure.

To understand the peril of this mindset, we can look at a classic consulting parable we’ll call “Project Apex.” Imagine a high-energy VP of Sales at a mid-stage SaaS company slamming their fist on a boardroom table. “Our sales data is a black box,” they declare. “My reps are flying blind. We need an AI Co-Pilot integrated into our CRM to summarize rep activity, analyze real-time pipeline velocity, and recommend next best actions. What’s the solution?”

The product and engineering teams, eager to deploy the latest tech, nod enthusiastically. They spend six months and $500,000 building the ultimate AI assistant. Six weeks after launch, daily active users total a fraction of the sales floor. More importantly, the reps’ underlying behavior hasn’t changed an inch, and revenue remains stagnant.

The post-mortem reveals a brutal truth: The problem was never “visibility” or a lack of AI-driven insights. The company’s compensation plan rewarded any closed deal, regardless of size or strategic value. The reps weren’t flying blind at all; they knew exactly where the complex, high-value leads were hiding. They were intentionally ignoring them to close easy deals and secure their bonuses. Project Apex was a half-million-dollar AI solution to a symptom. The real problem was an unaligned incentive structure. If the AI Co-Pilot had actually succeeded in its mandate, it wouldn’t have accelerated company growth—it’d have just helped reps identify and close the wrong deals even faster.

Why do brilliant leaders and consultants fall into the Project Apex trap so consistently? It comes down to cognitive frameworks. When faced with a problem, most corporate strategy relies heavily on “reasoning by analogy.” This approach looks at what competitors are doing and attempts to do it slightly better. If a rival deploys an AI assistant, reasoning by analogy dictates we must build one too. It assumes the current baseline is fundamentally correct and only requires incremental optimization.

This is the “Cook” mindset. A cook follows a recipe—an analogy for what’s already been proven to work. But they’re permanently constrained by existing templates.

True problem-architects, however, operate as “Chefs.” They use First Principles Thinking. They forcefully reject analogical reasoning, deconstructing a complex problem down to its most basic, undeniable axioms, and rebuilding the solution entirely from the ground up. The Chef doesn’t ask, “How do we build a better Co-Pilot to help our reps write emails?” The Chef asks, “What is the fundamental, indivisible economic truth of acquiring a customer in this market?”

To shift a client from the Cook to the Chef mentality, consultants should wield the Socratic Method—not as a tool for argument, but as a mental scalpel for collaborative discovery. Before a single line of Co-Pilot code is written, the demand must be deconstructed using the 5 Categories of Socratic Inquiry:

Clarification: Ensuring the core assertion is understood. “When we say reps take ‘too much time’ drafting emails, what exactly do we mean? What’s the baseline?”

Challenge Assumptions (The Inversion): Hunting for foundational beliefs. “What if the emails our reps are currently writing are perfectly crafted, but they’re choosing the wrong channels, or the buyers simply aren’t reading them?”

Evidence & Reasoning: Forcing an empirical defense. “What observable data leads us to conclude that drafting speed is the primary blocker to revenue?”

Alternative Viewpoints: Expanding the problem space. “How would the CFO view this investment? What would our top-performing reps say is actually stopping them?”

Implications & Consequences: Testing downstream effects. “If we build this Co-Pilot perfectly, and reps can send 10,000 personalized emails a day instead of 100, what else must be fundamentally true for our pipeline to accelerate?”

By drilling down past the assumptions, the consultant hits bedrock. When we analyze this friction through the lens of the 17 Universal Customer Journeys, a stark reality emerges. The leadership team assumes the friction lies in the reps’ Utilization Journey (the day-to-day use of the CRM and email platform). But the Socratic deconstruction reveals that the real friction lies in the buyer’s Selection Journey. The target market has fundamentally shifted; enterprise buyers are no longer responding to cold outbound emails, no matter how personalized they are. They’re making purchasing decisions in dark social channels, peer networks, and closed communities.

The underlying issue isn’t email velocity; it’s a flawed go-to-market motion and deteriorating product-market fit.

If you deploy an AI Co-Pilot to solve this, you haven’t solved the problem. You’ve simply built a highly efficient machine that spans the globe, delivering the wrong message to the wrong persona in the wrong channel at an unprecedented scale. You’ve automated your own irrelevance.

The Monolithic Fallacy and the “Idiot Index” of Co-Pilots

Let’s pull back the curtain on how these assistive overlays actually get funded. The problem isn’t just that Co-Pilots are bad solutions; it’s that they’re wrapped in business cases that violate the most fundamental laws of process engineering. When an enterprise falls for the Monolithic Fallacy, they pour millions of dollars into developing an AI assistant, completely oblivious to the fact that they’re pouring concrete over a broken foundation.

To understand exactly how Co-Pilot thinking destroys value, we have to evaluate it against Elon Musk’s rigorous 5-Step Execution Engine. Forged during the brutal “production hell” of scaling the Tesla Model 3, this algorithm is a ruthless heuristic designed to bust bureaucracy and eliminate waste. Crucially, the algorithm dictates that the steps must be executed sequentially. Failing to follow the sequence results in the catastrophic optimization of waste.

Here’s the sequence:

Make the requirements less dumb: Attach a human name to every requirement. Treat them as inherently flawed hypotheses.

Delete any part or process you can: If you aren’t forced to add back at least 10% of what you deleted, you didn’t delete enough.

Simplify and optimize: Only do this after the ruthless deletion phase.

Accelerate cycle time: Shave milliseconds off the optimized, simplified process.

Automate: Introduce robotics or software automation strictly as the final step.

Notice where “automate” sits. It’s the absolute final step. Yet, Co-Pilot thinking inherently flips this entire algorithm upside down. A Co-Pilot is, at its core, a form of automation—an intelligent agent designed to execute tasks on behalf of a human. When a consulting team prescribes an AI assistant to fix a clunky software suite, they’re jumping straight to Step 5.

A Brief Pause: Why am I obsessing over a process used by the richest man in the world, who has built at least 6 companies with multi-billion dollar valuations? I just told you why. If you can point to someone else who eats their own dog food, and does so successfully, I will happily devour their methods as well. Now, back to the show!

In jumping to Step 5, these teams completely ignore Step 2: Delete any part or process you can. The corporate default is additive. We assume that if something is hard, we must add a tool to make it easier. Co-Pilots refuse to delete. They thrive on the accumulation of defensive, “just-in-case” engineering. Why simplify an archaic, 15-step approval process when you can just build a Co-Pilot to auto-fill the 15 forms for you?

This leads to a catastrophic violation of Step 3 (Simplify and optimize). As the internal Tesla maxim dictates, the most common error made by capable engineers is spending immense intellectual capital optimizing a component or process that shouldn’t exist in the first place. Early in the Model 3 ramp-up, Tesla tried to build an “alien dreadnought”—a fully automated factory devoid of humans. By attempting to automate complex, un-optimized tasks (like manipulating flexible fiberglass mats), the automation actively bottlenecked production. They had to rip the robots off the line.

When you automate a bloated digital process with an LLM, you haven’t fixed the process. If you’re digging your own grave, adding a robotic Co-Pilot just helps you dig it faster.

We can mathematically quantify this dysfunction using another of Musk’s favored economic heuristics: the “Idiot Index.”

In hardware manufacturing, the Idiot Index is a diagnostic ratio calculated by dividing the total cost of a finished component by the fundamental cost of its basic raw materials. If a finished aluminum widget costs $1,000, but the raw block of aluminum required to machine it costs only $100, the index is 10:1. The discrepancy is deemed “idiotic.” It proves the high cost isn’t dictated by the laws of physics, but by flawed design, over-engineering, or supply chain exploitation.

We can apply the exact same First Principles Calculator to digital operations. The “raw material” of a digital task is the theoretical floor of its execution, typically a fraction of a cent of compute for an API call or a database query. The “finished cost” is the current commercial cost, including the human labor required to navigate the bad software.

Let’s say an internal compliance check takes a human analyst two hours to complete due to a horrific UI and fragmented databases, costing the company $100 in labor per check. The theoretical digital floor to cross-reference two data fields is $0.01. That’s an Idiot Index of 10,000:1.

The firefighter’s solution? Build a $2 million GenAI Co-Pilot to help the analyst read the fragmented databases faster, bringing the human time down to 30 minutes ($25). They celebrate a 75% reduction in labor cost. But the problem-architect looks at the math and winces. The underlying database architecture is still broken. The theoretical floor is still $0.01. By adding a highly complex, computationally expensive AI layer on top, they haven’t solved the Idiot Index; they’ve just institutionalized it, adding millions in CapEx to support an infrastructure that shouldn’t exist.
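The arithmetic in this example can be sketched in a few lines of Python. This is a minimal illustration, not a costing model: the function name `idiot_index` and the break-even calculation are assumptions added here, and the break-even figure deliberately ignores inference and maintenance costs.

```python
def idiot_index(finished_cost: float, floor_cost: float) -> float:
    """Ratio of what a task costs today to its theoretical floor."""
    if floor_cost <= 0:
        raise ValueError("floor_cost must be positive")
    return finished_cost / floor_cost


# Figures taken from the compliance-check example in the text.
LABOR_BEFORE = 100.00    # two analyst hours per check
LABOR_AFTER = 25.00      # thirty analyst minutes per check, with the Co-Pilot
DIGITAL_FLOOR = 0.01     # cost to cross-reference two data fields
BUILD_COST = 2_000_000   # one-off Co-Pilot build

index_before = idiot_index(LABOR_BEFORE, DIGITAL_FLOOR)
index_after = idiot_index(LABOR_AFTER, DIGITAL_FLOOR)
labor_reduction = 1 - LABOR_AFTER / LABOR_BEFORE

# Assumption: running costs of the Co-Pilot are ignored, so the real
# break-even point would be later than this.
checks_to_break_even = BUILD_COST / (LABOR_BEFORE - LABOR_AFTER)

print(f"Idiot Index before Co-Pilot: {index_before:,.0f}:1")
print(f"Idiot Index after Co-Pilot:  {index_after:,.0f}:1")
print(f"Labor reduction per check:   {labor_reduction:.0%}")
print(f"Checks needed to break even: {checks_to_break_even:,.0f}")
```

On these numbers the celebrated 75% saving still leaves an index of 2,500:1, and the build needs roughly 26,700 checks before it pays for itself.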

We see this play out disastrously in the B2C world all the time.

Consider a massive retail brand whose mobile app has become a bloated maze of promotional banners, hidden menus, and conflicting navigation logic. Customers are abandoning their carts in droves. Instead of auditing the core customer experience, the brand’s leadership falls for the Co-Pilot pitch. They spend millions deploying a “Smart Shopping Assistant”—a generative AI chatbot integrated right into the app’s home screen. The promise is that users can simply type, “I need a blue winter coat for a ski trip,” and the Co-Pilot will bypass the terrible UI and serve up the perfect product.

The brand has fundamentally misdiagnosed where its friction sits among the 17 Universal Customer Journeys. They thought they were improving the Selection Journey (choosing a product). But the real friction was buried in the Purchase Journey (the transactional logistics) and the Configuration Journey (setting up the app profile and payment details).

When a user tries to buy the blue winter coat, the Co-Pilot still has to route them back into the app’s broken checkout flow. Even worse, the brand forced a conversational interface onto an interaction where users don’t actually want to converse. Customers buying socks or a jacket on a mobile phone don’t want a chatty companion; they want a one-click checkout. They want seamless, invisible utility.

By refusing to execute Step 2 (delete the bad UI) and jumping straight to Step 5 (automate the search via chatbot), the brand compounded their friction. The app became heavier, slower, and more confusing. The Co-Pilot didn’t act as a concierge; it acted as a massive, expensive band-aid over a fatal UX wound. It proved that if you layer intelligence over incompetence, the incompetence ultimately wins.

The 10 Types of Innovation and the Defensibility Squeeze

Even if a company manages to build a Co-Pilot that isn’t a complete financial boondoggle, they immediately run headfirst into a second, arguably more fatal wall: defensibility. If you’ve optimized a symptom instead of curing the root cause, what exactly have you built? To answer this, we have to look through the lens of Doblin’s 10 Types of Innovation.

The Doblin framework is a strategic bedrock because it forces organizations to realize that innovation isn’t just “inventing a new gadget.” Most companies fixate exclusively on adding features, which is the easiest type of innovation for competitors to copy. The 10 Types are separated into three distinct categories:

1. Configuration (The Business Backend): How you organize and make money. Highly defensible.

Profit Model: Finding a fresh way to convert value into cash.

Network: Creating value through partnerships.

Structure: Organizing company assets and talent in unique ways.

Process: Signature operational methods that create superior efficiency.

2. Offering (The Core Product): What you actually sell. Highly visible, easily copied.

Product Performance: Distinguishing features, functionality, and quality.

Product System: Complementary products bundled into an ecosystem.

3. Experience (The Customer Interface): How you interact with the market.

Service: Support and enhancements that amplify the product’s value.

Channel: How offerings are delivered to the market.

Brand: Representing the business to drive choice.

Customer Engagement: Fostering deep, meaningful interactions.

Most companies focus almost exclusively on the middle layer—the Offering. Specifically, they obsess over “Product Performance.” When an enterprise builds a Co-Pilot, it sits squarely in this exact bucket. A Co-Pilot is just a feature. It’s a “Product Performance” enhancement.

Here’s why this is a strategic nightmare: Product Performance is the most visible, most seductive, and paradoxically, the least defensible type of innovation. It’s highly susceptible to the “Defensibility Squeeze.” When you build your moat out of a conversational interface powered by a commercially available LLM, you don’t actually have a moat. You have an easily replicable feature that your biggest, best-funded competitor will clone by Friday afternoon.

If your core competitive advantage is that you’ve added an OpenAI wrapper to your dashboard to help users summarize PDFs, you haven’t fundamentally altered the market. You’re simply renting intelligence from a massive AI vendor to prop up your Offering layer, while ignoring the layers that actually generate enterprise value.

True, defensible monopolies aren’t built by obsessing over the core product’s features. They’re built by innovating across the unsexy backend (Configuration) and the emotional front-end (Experience). These layers are notoriously difficult to copy because they require deep structural changes, complex partnerships, and a radical rethinking of how the business makes money.

If Co-Pilot thinking traps organizations in the shallow end of the Offering category, how do problem-architects build real moats within Pathway B (Sustaining Innovations)? The strategic fix requires shifting the client’s focus away from “features” and toward “configuration.”

Let’s look at an example in the B2B professional services space.

Imagine a legacy legal-tech firm whose software is designed to help massive law firms track billable hours and assign complex billing codes. The attorneys loathe the software. It takes them hours at the end of the month to locate the correct codes and prepare their invoices. The leadership team decides they need an innovation play to prevent churn. The firefighter’s pitch? Let’s build a GenAI Billing Co-Pilot! The lawyer can just type, “I spent two hours reviewing the Smith contract,” and the Co-Pilot will automatically suggest the correct billing codes.

It sounds like a win. But it’s just a Product Performance tweak. It’s highly copyable. Any other legal-tech firm can build a billing chatbot.

The problem-architect applies the Defensibility Squeeze. They look at Doblin’s framework and realize the friction isn’t in the Offering; it’s derived from the firm’s Profit Model (a Configuration layer innovation). The entire reason the complex software exists is because the legal industry relies on the archaic, highly debated model of the “billable hour.”

The true sustaining innovation isn’t building a chatbot to help lawyers log hours faster; it’s abandoning the billable hour entirely. The architect advises the legal-tech firm to build software that facilitates value-based, flat-fee subscription models for corporate clients. Once the firm shifts to flat-fee retainers, the need to meticulously track hours and assign complex billing codes vanishes.

The friction wasn’t automated; it was deleted. The Co-Pilot became completely unnecessary because the Profit Model innovation fundamentally altered the way value was exchanged. And unlike a chatbot, overhauling a firm’s pricing structure and underlying business model is incredibly difficult for a competitor to copy quickly.

We see this same Defensibility Squeeze in the B2C sector.

Take a consumer hardware brand selling smart home appliances. They notice a massive spike in customer service calls from users struggling with the Repair Journey (diagnosing and fixing broken washing machines). The default Co-Pilot thinking dictates they should build an AI troubleshooting assistant into their app. The user chats with the AI, the AI asks a series of diagnostic questions, and eventually tells the user which part to order.

Again, this is a highly visible, weak Product Performance feature. Competitors can build the exact same troubleshooting bot.

The problem-architect shifts the focus away from the Offering and toward Experience and Configuration, specifically Service and Process innovations. Instead of forcing the user to chat with a bot, the brand redesigns the hardware to include cheap, embedded IoT sensors that monitor the motor’s health. When the sensor detects an impending failure, it bypasses the user entirely. It communicates directly with the company’s supply chain (a Process innovation) and auto-ships the replacement part to the user’s door before the machine even breaks, accompanied by a simple QR code linking to a 30-second replacement video.

The user never had to open the app. They never had to chat with an AI. They never entered the Repair Journey at all. The brand built a fortress around their customer experience by innovating their backend logistics and proactive service model.

When a client demands a Co-Pilot, the consultant’s job is to wield the Doblin framework like a shield. They must squeeze the demand out of the overcrowded “Offering” category and force it into the “Configuration” or “Experience” categories. Because if you’re just building features to help people survive your bad design, you aren’t building a business. You’re just building a waiting room for disruption.

The JTBD Lens: Are We Optimizing the Wrong Step?

If you’ve successfully squeezed your client’s demand out of the “Offering” category, the next hurdle is figuring out exactly where the actual friction lives. We have to map the user’s struggle. This is where Jobs-to-be-Done (JTBD) theory becomes a consultant’s most potent diagnostic tool. After all, people don’t buy enterprise software (or Co-Pilots); they hire them to get a specific job done.

To break down a job objectively, problem-architects use a 9-step chronological job map. Every human task, no matter how complex, flows through this sequence:

Define: Planning or assessing upfront.

Locate: Gathering items, data, or resources.

Prepare: Integrating inputs or environments.

Confirm: Verifying or deciding before execution.

Execute: The primary, core action.

Monitor: Tracking the execution.

Resolve: Troubleshooting deviations or issues.

Modify: Making adjustments based on monitoring.

Conclude: Wrapping up the process.

Here’s the core issue: Co-Pilot thinking is morbidly obsessed with the Execute phase.

When an executive says, “We need an AI to draft quarterly performance reports,” they’re staring exclusively at the Execute step. But what if drafting the report only takes 10 minutes, while the Locate step (hunting down fragmented data across five different legacy systems) takes four hours? What if the Prepare step (cleaning and formatting that data) takes another three hours? And what if the Confirm step (verifying the AI didn’t hallucinate and invent false revenue numbers) takes yet another two hours?

A Co-Pilot optimizes the 10-minute execution phase while completely ignoring the massive temporal drain surrounding it. It’s a localized optimization that fails to accelerate the global process.
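To make the local-versus-global point concrete, here is a small sketch using only the four step durations quoted in the report example. The helper `share_of_job_saved` is a hypothetical name, and the other five job-map steps are left out because the text gives no timings for them.

```python
# Minutes per step, from the quarterly-report example in the text.
JOB_MINUTES = {"Locate": 240, "Prepare": 180, "Confirm": 120, "Execute": 10}


def share_of_job_saved(step_minutes: dict, step: str, speedup: float) -> float:
    """Fraction of total job time removed when one step runs `speedup` times faster."""
    if speedup < 1:
        raise ValueError("speedup must be at least 1")
    total = sum(step_minutes.values())
    saved = step_minutes[step] * (1 - 1 / speedup)
    return saved / total


# Best case for the Co-Pilot: the Execute step becomes instantaneous.
instant_execute = share_of_job_saved(JOB_MINUTES, "Execute", float("inf"))
# A modest structural fix: the Locate step merely runs twice as fast.
halved_locate = share_of_job_saved(JOB_MINUTES, "Locate", 2.0)

print(f"Instant Execute saves {instant_execute:.1%} of the job")
print(f"Halving Locate saves  {halved_locate:.1%} of the job")
```

Even a perfect Co-Pilot removes under 2% of the 550-minute job, while merely halving the Locate step removes about 22%.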

We can zoom out even further to look at the macro level using the 17 Universal Customer Journeys. Whether you’re in B2B SaaS or B2C retail, your customers are traveling through journeys like Selection, Purchase, Configuration, Integration, Learning, Utilization, Maintenance, and Repair.

When pitching a Co-Pilot, leadership teams overwhelmingly assume their friction lives in the Utilization Journey (the day-to-day use of the software). They think, “Our users are struggling to utilize the tool; let’s give them a conversational assistant to help them navigate it.” But the real, mathematically validated pain almost always lives elsewhere. The friction is usually buried deep in the Integration Journey (connecting the tool to legacy systems), the Configuration Journey (the grueling initial setup), or the Repair Journey (troubleshooting when the platform inevitably breaks).

How do we prove this to a client who is stubbornly fixated on an AI assistant? We translate their qualitative complaints into rigorous, MECE-compliant (Mutually Exclusive, Collectively Exhaustive) Customer Success Statements (CSS).

If you look closely at standard Co-Pilot pitches, you’ll notice they rely heavily on forbidden, subjective verbs. The pitch promises to “empower reps,” “facilitate ease of use,” or “manage workflows.” To a problem-architect, these words are meaningless. You can’t mathematically measure “empowerment.” It’s marketing fluff designed to hide a lack of empirical data.

A valid CSS strips away the fluff. It follows a strict, solution-agnostic syntax: [Direction] + [Metric] + [Object of Control] + [Contextual Clarifier]. It must start with either Minimize (for reducing friction and cost) or Increase (for augmenting positive value and certainty). It cannot contain adverbs like “quickly” or “efficiently,” nor can it describe a software interface (like “click” or “log in”).
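The surface rules of this syntax are mechanical enough to lint. The sketch below is a rough illustration rather than a full grammar: `css_violations` is a hypothetical helper, the word lists contain only the examples named in this section, and it cannot judge whether the metric, object of control, and clarifier are well chosen. The two sample statements are the ones contrasted in the data migration scenario that follows.

```python
import re

DIRECTIONS = ("minimize", "increase")

# Assumption: these lists hold only the examples named in the text,
# so they are illustrative, not exhaustive.
BANNED_TERMS = {
    "subjective verb": ("empower", "facilitate", "manage"),
    "adverb": ("quickly", "efficiently"),
    "interface term": ("click", "log in"),
}


def css_violations(statement: str) -> list[str]:
    """Return the surface-level rule violations found in a candidate CSS."""
    text = statement.strip().lower()
    words = re.findall(r"[a-z]+", text)
    problems = []
    if not words or words[0] not in DIRECTIONS:
        problems.append("must start with Minimize or Increase")
    for label, terms in BANNED_TERMS.items():
        for term in terms:
            # Whole-word match, so "manage" does not flag "management".
            if re.search(rf"\b{re.escape(term)}\b", text):
                problems.append(f"contains {label}: {term}")
    return problems


firefighter_goal = "Quickly empower analysts to input legacy data into the new CRM."
architect_css = "Minimize the likelihood of incorrect data input during CRM integration."

for candidate in (firefighter_goal, architect_css):
    print(candidate)
    print("  violations:", css_violations(candidate) or "none")
```

A statement that passes this lint is not automatically a good CSS; the check only screens out the fluff before the real debate about metrics begins.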

Let’s contrast a firefighter’s goal with an architect’s CSS in a B2B enterprise data migration scenario.

The firefighter writes a goal: “Quickly empower analysts to input legacy data into the new CRM.” The proposed solution? An AI Co-Pilot that reads old spreadsheets and automatically fills in the CRM fields. The executives love it. It sounds fast and futuristic.

The problem-architect maps the Integration Journey instead. They look at the Confirm and Resolve steps of the job map, realizing the true cost isn’t the speed of data entry; it’s the cost of auditing bad data. They write a strict CSS: “Minimize the likelihood of incorrect data input during CRM integration.”

Now, let’s evaluate the Co-Pilot against that CSS. A conversational AI overlay doesn’t minimize the likelihood of incorrect data input. In fact, due to the inherent, probabilistic nature of LLMs, the Co-Pilot might actually increase that likelihood by hallucinating entries or misinterpreting spreadsheet columns. If your Co-Pilot auto-fills 10,000 fields, but the analyst has to manually review all 10,000 fields to ensure the AI didn’t invent a client’s phone number, you haven’t saved time. You’ve simply shifted the human burden from “data entry” to “AI babysitting.”

If your rigorously defined CSS is about minimizing the likelihood of data errors during integration, the solution isn’t a chatbot. The solution is building native, hard-coded API hooks between the two databases that transfer the data deterministically, with zero probabilistic guessing. You don’t need a conversational interface; you need invisible, structural integration.

Co-Pilots are undeniably shiny. They feel like the future. But when you apply the JTBD lens—when you map the user’s struggle across the 9 chronological steps and the 17 Universal Journeys—you’ll almost always find that the Co-Pilot is optimizing the wrong step, in the wrong journey, using the wrong metrics. It’s a multi-million-dollar hammer searching desperately for a nail, completely ignoring the fact that the entire house is sinking into the mud.

Epistemic Governance: Exposing the Flawed Data Behind Co-Pilots

If you’ve managed to map the user’s struggle, identify the correct chronological step, and frame a perfectly MECE-compliant Customer Success Statement, you’re halfway to stopping a disastrous Co-Pilot build. But the executive sponsor still sits across the table, armed with a slide deck claiming that 85% of their users “want an AI assistant.” How do you dismantle that momentum? You can’t just argue strategy; you have to rely on objective data and Epistemic Governance.

Most enterprises suffer from a crippling epistemological traffic jam. They consistently confuse epistemic uncertainty (we simply lack the data to know the answer) with aleatoric uncertainty (the answer is inherently random or unpredictable). Because of this confusion, they treat all data as equal, happily funneling massive capital into multi-million dollar Co-Pilot builds based on what we call “State 1 Hunches.”

In a rigorous Three-State Validation Matrix, every strategic input—whether it’s a problem, a feature request, or a market shift—must be categorized by its statistical confidence level.

State 1 is the Hunch (Low Confidence). It’s a raw hypothesis. It possesses no empirical primary data. When the VP of Product says, “Our competitors are launching AI agents; we need one to stay relevant,” that’s a State 1 Hunch. It is purely a qualitative heuristic, and it should only ever be scored conceptually on a Bivariate Risk/Impact matrix. A hunch grants you the right to run an experiment; it never grants you the right to write production code.

State 2 is the Assumption (Medium Confidence). Here the hunch has been through a round of Bayesian updating against some proxy data: maybe a few customer interviews or an industry analyst report suggesting that “conversational UI is the future.” It’s stronger than a hunch, but it’s still proxy data. You hold it for primary testing.

State 3 is the Validated Need (High Confidence). This state relies entirely on primary, quantitative survey data gathered directly from the verified Job Executor. And it’s here, in State 3, that the business cases for Co-Pilots usually commit their gravest error.
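The three states can be sketched as a small gate in code. This is only an illustration under stated assumptions: the field names, the `classify` function, and the rule that unverified primary data counts as State 2 are hypothetical choices, not part of the matrix as described above.

```python
from dataclasses import dataclass
from enum import Enum


class ValidationState(Enum):
    HUNCH = 1           # State 1: no empirical primary data
    ASSUMPTION = 2      # State 2: proxy data only
    VALIDATED_NEED = 3  # State 3: primary quantitative data from the Job Executor


@dataclass
class StrategicInput:
    claim: str
    has_proxy_data: bool = False            # interviews, analyst reports
    has_primary_quant_data: bool = False    # quantitative survey data
    job_executors_verified: bool = False    # respondents confirmed as Job Executors


def classify(item: StrategicInput) -> ValidationState:
    """Assign the highest state the available evidence supports."""
    if item.has_primary_quant_data and item.job_executors_verified:
        return ValidationState.VALIDATED_NEED
    # Assumption: primary data from unverified respondents is treated as proxy data.
    if item.has_proxy_data or item.has_primary_quant_data:
        return ValidationState.ASSUMPTION
    return ValidationState.HUNCH


def may_write_production_code(item: StrategicInput) -> bool:
    """A hunch earns an experiment; only a validated need earns a build."""
    return classify(item) is ValidationState.VALIDATED_NEED


pitch = StrategicInput("Competitors are launching AI agents; we need one.")
print(classify(pitch).name, may_write_production_code(pitch))
```

The point of the gate is procedural: the funding question is answered by the evidence type, not by the seniority of the person making the claim.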

When legacy organizations attempt to validate a new feature, they blast out a survey asking users to rate how “important” an AI Co-Pilot would be on a scale of 1 to 5, and how “satisfied” they are with the current process. Then, the product team averages those Likert scores and performs basic arithmetic to build their case.

This is a severe methodology violation. Likert scales yield ordinal data. The psychological distance between a “3” and a “4” is not mathematically identical to the distance between a “1” and a “2.” Averaging them creates a fictitious mean that completely distorts the true distribution of customer pain. You end up forecasting a 5-year ROI on a product using a mathematical ghost. Furthermore, self-reported importance is highly susceptible to inflation bias. If you ask a frustrated employee if they want a magical AI assistant to help them do their job, they’ll always circle “5”.
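A quick way to see the problem is to compare two response sets that share the same mean. The ratings below are invented for illustration, and the `bottom_box_share` helper with its threshold of 2 is an assumption about how one might define “acute pain.”

```python
from collections import Counter
from statistics import mean

# Hypothetical 1-5 satisfaction ratings from two groups of ten respondents.
polarized = [1, 1, 1, 1, 1, 5, 5, 5, 5, 5]  # half the group is in acute pain
lukewarm = [3] * 10                          # nobody is in acute pain


def bottom_box_share(ratings, threshold=2):
    """Share of respondents rating at or below `threshold`."""
    return sum(1 for r in ratings if r <= threshold) / len(ratings)


for name, ratings in (("polarized", polarized), ("lukewarm", lukewarm)):
    distribution = dict(sorted(Counter(ratings).items()))
    print(
        f"{name}: mean={mean(ratings)}, "
        f"acute pain={bottom_box_share(ratings):.0%}, counts={distribution}"
    )
```

Both groups average exactly 3, yet one contains a large segment with an urgent problem and the other contains none. The mean erases the very thing the business case is supposed to measure.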

Here’s how you prove a Co-Pilot won’t move the needle: You stop asking people if they want an assistant, and you start measuring Objective Need.

First, we calculate Urgency. Instead of looking at average scores, we isolate the percentage of the population experiencing acute pain. We look purely at the gap between the share of respondents who rate a task as highly important and the share who are actually satisfied with it. A significant gap represents a valid, unfulfilled market expectation.
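As a rough sketch, Urgency can be computed as a difference between two proportions rather than two averages. The top-two-box cutoff (ratings of 4 or 5) and the sample ratings are assumptions made for this example; the function names are hypothetical.

```python
def top_box_share(ratings, threshold=4):
    """Share of respondents rating at or above `threshold` on a 1-5 scale."""
    return sum(1 for r in ratings if r >= threshold) / len(ratings)


def urgency_gap(importance, satisfaction, threshold=4):
    """Share who call the task highly important minus share who are satisfied."""
    return top_box_share(importance, threshold) - top_box_share(satisfaction, threshold)


# Hypothetical responses from the same ten Job Executors for one task.
importance = [5, 5, 4, 5, 4, 5, 3, 5, 4, 5]    # 9 of 10 rate it 4 or 5
satisfaction = [2, 1, 3, 2, 4, 1, 2, 3, 2, 5]  # 2 of 10 rate it 4 or 5

gap = urgency_gap(importance, satisfaction)
print(f"Urgency gap: {gap:.0%}")
```

Because the calculation only counts respondents above a cutoff, it never treats the distance between a “3” and a “4” as a number that can be averaged.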

But Urgency isn’t enough. We must also calculate Impact, or Derived Importance. To eliminate self-report bias, we bypass what the user claims is important. Instead, we look at the correlation between their satisfaction with a specific step and their overall satisfaction with the broader job they are trying to get done.

If satisfaction with a specific step strongly correlates with overall job satisfaction, fixing that step is likely to drive systemic satisfaction. If there’s no correlation, the step is practically irrelevant, regardless of what the user claimed in the survey.
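Here is a minimal sketch of Derived Importance. The text above does not specify which correlation to use; this example assumes Spearman rank correlation because it respects the ordinal nature of survey ratings. The function names and the sample ratings are hypothetical.

```python
from statistics import mean


def average_ranks(values):
    """Rank values from 1..n, giving tied values the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared = (i + j) / 2 + 1  # average of the 1-based positions i+1..j+1
        for k in range(i, j + 1):
            ranks[order[k]] = shared
        i = j + 1
    return ranks


def pearson(x, y):
    """Pearson correlation; returns 0.0 when either input has no variation."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / (var_x * var_y) ** 0.5


def derived_importance(step_satisfaction, overall_satisfaction):
    """Spearman correlation: Pearson correlation computed on the ranks."""
    return pearson(average_ranks(step_satisfaction), average_ranks(overall_satisfaction))


# Hypothetical ratings from eight respondents.
overall = [1, 2, 2, 3, 3, 4, 4, 5]  # satisfaction with the whole job
step_a = [3, 4, 2, 4, 3, 2, 4, 3]   # satisfaction with step A
step_b = [1, 1, 2, 3, 3, 4, 5, 5]   # satisfaction with step B

print("step A:", round(derived_importance(step_a, overall), 2))
print("step B:", round(derived_importance(step_b, overall), 2))
```

In this invented data, satisfaction with step B rises and falls with overall satisfaction while step A shows no relationship, so step B is the one worth fixing no matter how the two were rated on stated importance. A correlation on a sample this small is only illustrative; a real study would need enough respondents to support the estimate.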

Let’s look at a B2B Procurement example. The procurement team is struggling with the Integration Journey of onboarding new vendors. The legacy software is terrible. The firefighter pitches an “AI Vendor Co-Pilot” to help managers parse the onboarding documents. In the survey, the stated importance for “Faster document parsing” is incredibly high (let’s say 90% top-box).

But when the problem-architect runs the numbers on derived importance, a shocking truth emerges. The correlation between “satisfaction with parsing speed” and “overall job satisfaction” is incredibly weak. It doesn’t move the needle. Why? Because going faster isn’t the real goal.

The architect then looks at a different CSS: “Minimize the risk of vendor compliance failure during onboarding.” The correlation for this statement, however, is overwhelmingly strong.

This data completely eviscerates the Co-Pilot business case. The math proves that users don’t want an AI assistant to help them parse documents faster; they want the underlying liability of compliance failure neutralized. A Co-Pilot—which can hallucinate or miss critical legal clauses—actually exacerbates the risk of compliance failure. The only way to solve a high-impact compliance liability is to bypass the human-document interface entirely and build a structural, API-driven network that verifies vendor compliance programmatically at the source.

By deploying rigorous Epistemic Governance, the consultant shifts the conversation from subjective opinions to quantitative evidence. They expose the fact that the client was about to fund a State 1 Hunch using flawed ordinal averages. The data ultimately supports what the architect knew all along: customers rarely want an assistant to help them endure a miserable, high-risk job. They want the job fundamentally altered or eradicated entirely.

From Symptom-Treating to Structural Inversion

Let’s be clear: Pathway B (Sustaining Innovation) isn’t inherently evil. An enterprise needs to defend its core product to survive. But the most dangerous mistake a consultant can make is treating Pathway B as a permanent resting place. If you’ve used First Principles thinking, Job Mapping, and Epistemic Governance to properly deconstruct a client’s demand, you’ll inevitably realize that treating symptoms with assistive overlays isn’t enough. The Co-Pilot is merely a bridge. The true destination is Pathway C: Disruptive Long-Term Vision.

To move a client from symptom-treating to true value creation, the problem-architect must become a Structural Inversion Strategist. This means completely bypassing incremental feature updates to radically alter the unit economics of the solution. If a Co-Pilot optimizes the existing process, Structural Inversion explodes the process entirely.

There are three primary levers of Structural Inversion a consultant can deploy to replace Co-Pilot thinking:

1. The Labor Inversion Leap

The fatal flaw of the Co-Pilot is that it leaves the human in the loop as the primary bottleneck and cost center. A Co-Pilot assists human labor, which sits in OPEX (operating expense). It takes a Level 3 or Level 4 knowledge worker, billing at $150 an hour, and gives them a slightly faster digital typewriter. The revenue of the company remains linearly coupled to the headcount of the employees.

True Labor Inversion decouples revenue from human OPEX. It shifts the fundamental unit of value delivery from human labor to scalable agentic compute, driving the marginal cost of delivery to near zero.

Imagine a B2B cybersecurity firm. Their current model involves highly paid analysts manually reviewing security logs to generate threat reports for clients. The firefighter’s Co-Pilot pitch is to build a “Security Chatbot” that helps the analysts write the reports 20% faster. The human is still doing the work; they’re just getting a tiny productivity bump.

The Labor Inversion strategy deletes the human from the execution phase entirely. Instead of a Co-Pilot, the firm builds a swarm of autonomous AI agents that monitor logs, identify threats, execute containment protocols, and generate the report with zero human intervention. The role of the human shifts from execution to orchestration and exception handling. By inverting the labor model, the firm can scale from serving 100 clients to 10,000 clients without hiring a single new analyst. The Co-Pilot made them slightly faster; Labor Inversion made them infinitely scalable.
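The difference in unit economics can be sketched with a toy cost model. Every input here is an assumption made for illustration: the hours per client, compute cost, exception rate, and function names are invented, the $150 hourly rate is borrowed from the knowledge-worker example above, and “20% faster” is simplified to a 20% cut in hours.

```python
def co_pilot_cost(clients, hours_per_client, hourly_rate=150.0, speedup=0.20):
    """Delivery cost when analysts do the work with a Co-Pilot's speed boost."""
    return clients * hours_per_client * (1 - speedup) * hourly_rate


def inverted_cost(clients, compute_per_client, exception_rate,
                  hours_per_exception, hourly_rate=150.0):
    """Delivery cost when agents execute and humans handle only exceptions."""
    compute = clients * compute_per_client
    human = clients * exception_rate * hours_per_exception * hourly_rate
    return compute + human


for clients in (100, 10_000):
    assisted = co_pilot_cost(clients, hours_per_client=10)
    inverted = inverted_cost(clients, compute_per_client=5.0,
                             exception_rate=0.02, hours_per_exception=2.0)
    print(f"{clients} clients: Co-Pilot ${assisted:,.0f} vs inverted ${inverted:,.0f}")
```

Under these assumed inputs, each additional client costs about $1,200 in analyst time with the Co-Pilot and about $11 with the inverted model. Both curves are still linear in client count, but the slope of the second is small enough that headcount stops being the constraint on growth.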

2. The CapEx Inversion Leap

If you’re dealing with physical infrastructure, Co-Pilot thinking often manifests as expensive software built to manage terrible physical assets. CapEx (Capital Expenditure) Inversion forces a company to externalize the hardware to the market and internalize the intelligence to the platform.

A classic example is the evolution of the taxi industry. If a legacy taxi company hired a consultant to innovate, the Co-Pilot approach might be to build “predictive routing software” to help their dispatchers manage the company-owned fleet of cars more efficiently. They’re still stuck owning the depreciating assets (the cars) and paying the dispatchers.

Uber executed a CapEx Inversion. They realized there was massive “Orphaned Capacity” sitting in driveways around the world. They externalized the heavy CapEx (making the drivers own the cars) and internalized the intelligence (the matchmaking algorithm). They didn’t build a Co-Pilot for dispatchers; they inverted the entire capital structure of transportation.

When your client asks for an AI assistant to manage their expensive, cumbersome physical assets, you must ask: “How can we externalize these atoms to the market and own only the orchestration software?”

3. The Network Inversion Leap

Most legacy businesses operate on a linear pipeline model: the company creates value, pushes it down a supply chain, and a customer consumes it. When friction arises in a linear pipeline, the company builds a Co-Pilot to help push the value down the pipe faster.

Network Inversion transitions the business from a linear pipeline to a decentralized platform. It shifts the burden of value creation from the company to the users themselves.

Consider an educational tech company that produces coding courses. Their instructional designers are overwhelmed trying to keep up with the fast-changing tech landscape. The Co-Pilot pitch? An “AI Curriculum Assistant” to help the internal designers write course material faster.

The Network Inversion strategy abandons the linear pipeline entirely. Instead of the company acting as the sole creator of value, they build a decentralized marketplace where expert developers around the world can create, upload, and sell their own micro-courses to students, with the platform taking a 20% cut. The company no longer needs a Co-Pilot to write faster; they’ve inverted the network so that the market creates the value for them.

By utilizing these three inversion levers, problem-architects ensure their clients aren’t just funding glorified digital assistants. They’re designing defensible monopolies that fundamentally rewrite the economic rules of their industry.

Conclusion – The Problem-Architect’s Mandate

The modern enterprise is at a crossroads. The explosive rise of Generative AI has presented organizations with a tantalizing, dangerous shortcut. It’s incredibly easy to succumb to the allure of the “Co-Pilot”—to look at a messy, bloated, analog organization and promise that a conversational AI overlay will magically fix everything. It’s easy because it requires no structural confrontation. It offends no one’s departmental turf. It validates the “firefighter” mentality that rewards speed and visible activity over deep, strategic clarity.

But as we’ve established, the consultant who sells a Co-Pilot to solve a systemic operational flaw is engaging in intellectual malpractice.

Your ultimate mandate isn’t to build tools; it’s to be a Problem-Architect. To institutionalize this mindset and safeguard against the monolithic fallacy, you must stress-test the customer promise against reality before a single line of code is written.

The journey from a vague corporate complaint to a disruptive market shift isn’t a straight line. It requires moving systematically through a rigorous lattice of logic. You must Deconstruct the analogy (Socratic Scalpel), Calculate the bloat (Idiot Index), Reframe the moat (Doblin 10 Types), Map the struggle (JTBD), Validate the data (Epistemic Governance), and finally, Invert the structure (Structural Inversion).

If you deploy an overlay that leaves the human as the primary bottleneck, if you optimize a symptom without fixing the underlying liability, and if you rely on hunches rather than objective, correlated data, you aren’t disrupting anything. You’re just building a Pathway B sustaining feature and disguising it as the future.

Yes, it’s significantly harder to sell a client on a structural tear-down than it is to sell them a shiny new AI chatbot. The firefighter will always get the initial applause. But when the smoke clears, the Co-Pilot will inevitably break under the weight of the underlying friction.

Be the architect. Don’t build them a better compass to navigate a burning building. Build them a better building.

If you find my writing thought-provoking, please give it a thumbs up and/or share it. If you think I might be interesting to work with, here’s my contact information (my availability is limited):

Book an appointment: https://pjtbd.com/book-mike

Email me: mike@pjtbd.com

Call me: +1 678-824-2789

Join the community: https://pjtbd.com/join

Follow me on 𝕏: https://x.com/mikeboysen
