When you’re chasing innovation, failure is rarely the result of a deficit in engineering talent or a lack of financial resources. More often than not, products fail because highly capable teams build brilliant, flawless solutions for completely the wrong problems. The Jobs-to-be-Done (JTBD) framework was designed to prevent exactly this by shifting the focus from the product to the underlying human struggle. Yet, despite its widespread adoption, JTBD frequently falls short in complex B2B ecosystems.

The reason is simple: the framework is only as good as the inputs you feed into it, and human strategists are the primary bottleneck. Before we can map a customer’s journey or engineer a solution, we must confront the psychological and organizational flaws that corrupt the starting point of strategy development. If the initial problem definition isn’t based on fundamental truths, the rest of the innovation process simply compounds and scales the error.

The Human Bottleneck in Innovation Strategy

The Illusion of Alignment

The modern enterprise is fundamentally addicted to “solution-jumping.” When a market pressure arises, teams instinctively rush to define the output rather than deconstruct the demand. They treat surface-level symptoms—commonly referred to as “pain points”—as root causes.

Let’s consider a common B2B scenario: A VP of Operations at an industrial equipment company observes that field technicians are taking too long to complete on-site machine repairs, severely impacting margins. The immediate mandate handed down to the innovation and product teams is, “Our techs are struggling with complex machinery. We need an Augmented Reality (AR) headset to overlay 3D repair manuals directly in their field of vision.” The team rapidly aligns around this directive. Budgets are approved, AR vendors are selected, and millions in capital are deployed. This is the illusion of alignment. The organization is perfectly aligned around delivering a symptom-level output.

When the AR headsets are deployed to the field, repair times actually increase, and within six weeks, the expensive headsets are abandoned in the back of service trucks. Why? Because the problem was never a lack of technical knowledge or visibility. The underlying reality was that the central warehouse routinely dispatched technicians to job sites without the correct replacement parts. The techs knew perfectly well how to fix the machines; they simply didn’t have the physical materials required to complete the job on the first visit. The AR headset was a costly, highly engineered band-aid. The actual “job” was redesigning predictive inventory staging and fixing the dispatch logistics.

When organizations jump to solutions, they’re solving symptoms. And solving a symptom almost always leaves the core job entirely unaddressed.

The Cognitive Biases at Play

If you want to correctly identify the real job, you need to understand that your own brain is wired to sabotage the process. Human strategists corrupt JTBD inputs through three pervasive cognitive biases:

Action Bias: Corporate environments reward forward motion. Writing code, launching marketing campaigns, and shipping features feel like progress. Conversely, pausing to rigorously interrogate a mandate feels like stalling. Teams default to building because execution is visible and measurable, whereas deconstruction is abstract.

Authority Bias: In most organizations, the “Highest Paid Person’s Opinion” (HiPPO) dictates the product roadmap. When a brilliant or senior leader outlines a requirement, teams rarely pressure-test the assumption. In reality, requirements originating from highly intelligent leaders are the most dangerous, because their authority creates a psychological shield that prevents colleagues from challenging the underlying logic.

Confirmation Bias: Even when teams attempt to use JTBD, they often conduct customer interviews with a pre-decided solution in mind. They don’t listen to discover the user’s struggle; they listen to find data points that validate the feature they already want to build.

The “Cook vs. Chef” Dilemma

The inability to identify the real job is compounded by how we process information. Most corporate strategy and product design relies heavily on Reasoning by Analogy. This is the mindset of the “Cook.” A cook works by following an existing recipe. They look at what competitors are doing, benchmark industry standards, and attempt to do it slightly better.

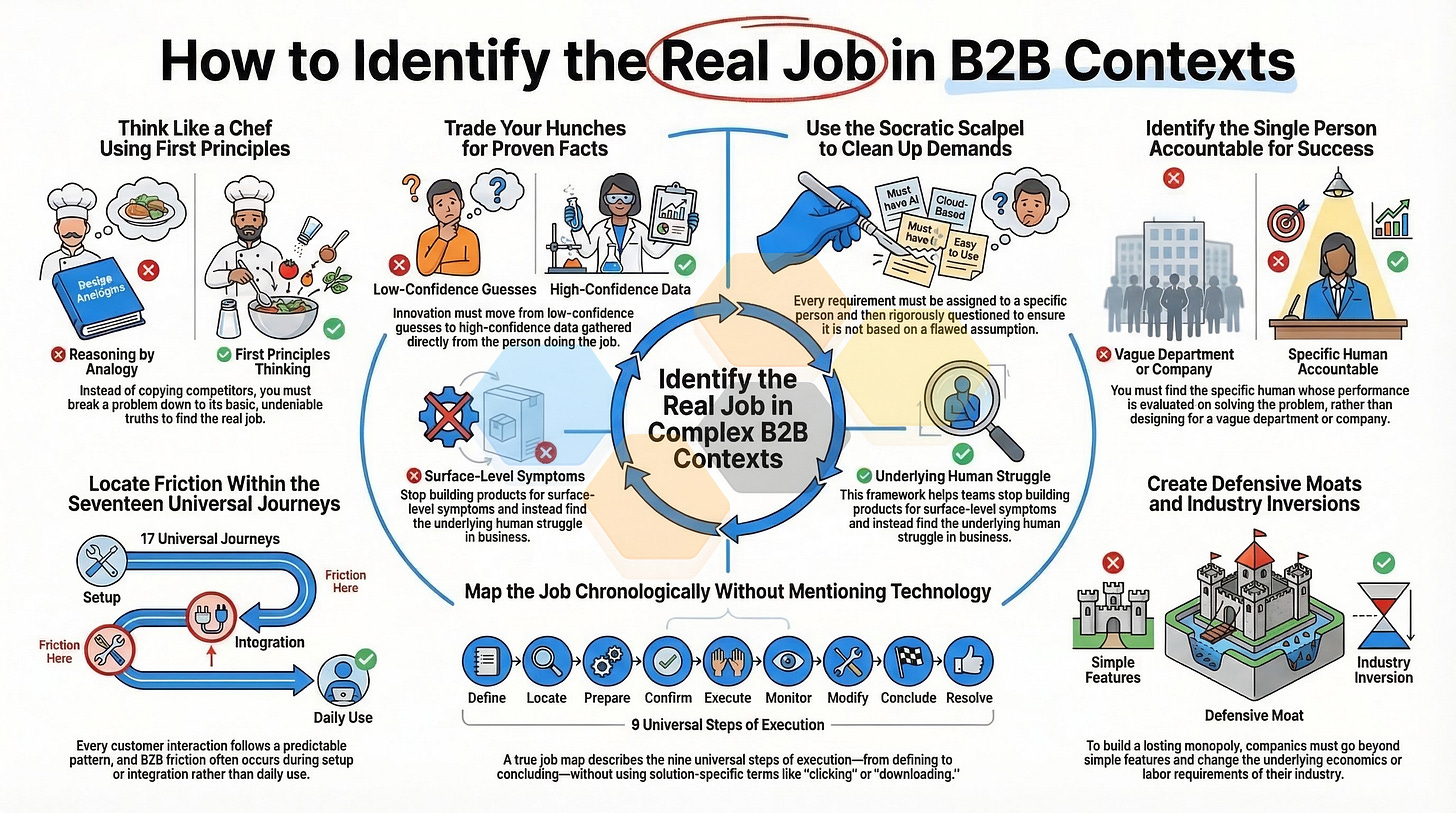

To find the true JTBD, strategists have got to adopt the mindset of the “Chef,” utilizing First Principles Thinking. A Chef deeply understands the raw materials and uses them to invent entirely new constructs from the ground up. First Principles Thinking requires forcefully rejecting industry analogies and smashing a complex problem down to its most basic, undeniable physical, digital, or economic truths (axioms).

The Architect vs. The Firefighter

Identifying the real job requires a fundamental shift in organizational reward systems. Today, most companies reward the “Firefighter,” who’s praised for speed, action, and heroics—extinguishing one symptom only to rush off to the next.

Innovation requires the “Problem-Architect.” The architect is rewarded for clarity, discipline, and systemic thinking. The highest-leverage activity any strategist or product leader can perform in business is having the courage to pause, refuse the initial solution-driven brief, and meticulously deconstruct the demand. Before we can map the job, we’ve got to first learn how to strip away our assumptions and isolate the undeniable truth of the customer’s struggle.

The Epistemological Crisis: Hunches vs. Axioms

If the human strategist is the primary bottleneck in identifying the true Job-to-be-Done, the mechanism by which they fail is almost entirely epistemological. Epistemology is the study of knowledge: how we know what we know, and how we differentiate justified belief from mere opinion.

Before we can accurately map a customer’s job, we must understand the nature of the uncertainty surrounding that job. This requires drawing a hard line between two distinct concepts: aleatoric uncertainty (inherent randomness that no amount of research removes) and epistemic uncertainty (a lack of knowledge that better data can reduce). The exact pain points of a B2B supply chain manager represent epistemic uncertainty: the truth is out there in the world; the enterprise simply hasn’t done the rigorous work required to uncover it.

The Monolithic Fallacy

This misunderstanding of uncertainty culminates in what’s known as the “Monolithic Fallacy.” Traditional business cases demand a highly structured, monolithic investment: a team must present a five-year ROI forecast and projected gross margins for a product that doesn’t yet exist, serving a market that hasn’t been mathematically quantified.

Because the team lacks empirical data, they’re forced to invent numbers. This creates a toxic, systemic bias within the innovation pipeline. It actively encourages the funding of safe, incremental ideas—where analogies make financial projections look plausible—and it guarantees the death of truly disruptive ideas, which inherently lack historical data to prop up a five-year forecast. When you force a team to predict the ROI of an unvalidated JTBD, you aren’t engaging in strategy; you’re mandating corporate theater.

The Three States of Validation

To dismantle the Monolithic Fallacy and protect the JTBD framework from garbage inputs, organizations have got to consolidate their evaluation criteria into a singular, rigorous pipeline: The Three-State Validation Matrix.

State 1: The Hunch (Low Confidence / High Uncertainty)

A hunch is a raw hypothesis or piece of internal company dogma backed by zero empirical primary data. In a B2B context, “Our clients need an AI-driven predictive maintenance tool” is a State 1 hunch. Teams must evaluate the magnitude of the business failure that would follow if the hunch is assumed true but is actually false. Never allocate engineering capital or attempt to build a job map based on a State 1 Hunch.

State 2: The Assumption (Medium Confidence / Bayesian Updating)

An assumption is a hunch that has been tested against secondary evidence (market reports, competitor data, and analogous industry trends) to establish a prior probability. Assumptions that reach a strong evidence threshold have merely earned the right to proceed to State 3.

State 3: The Validated Need (High Confidence / Empirical Proof)

This relies entirely on primary, quantitative, or behavioral data gathered directly from the verified Job Executor. Only when a customer’s struggle has transitioned into State 3 can we confidently deploy execution capital.
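The three states and the Bayesian step between them can be sketched in a few lines of Python. This is a minimal illustration, not the author's implementation: the `Claim` fields, the function names, and the probabilities in the usage note are assumptions introduced here.

```python
from dataclasses import dataclass
from enum import IntEnum


class ValidationState(IntEnum):
    HUNCH = 1           # no empirical primary data
    ASSUMPTION = 2      # supported by secondary evidence only
    VALIDATED_NEED = 3  # supported by primary data from the Job Executor


@dataclass
class Claim:
    # Hypothetical record of what evidence exists for a claimed need.
    statement: str
    has_secondary_evidence: bool = False  # market reports, competitor data
    has_primary_evidence: bool = False    # behavioral or quantitative data from the executor


def classify(claim: Claim) -> ValidationState:
    """Assign the highest state the available evidence supports."""
    if claim.has_primary_evidence:
        return ValidationState.VALIDATED_NEED
    if claim.has_secondary_evidence:
        return ValidationState.ASSUMPTION
    return ValidationState.HUNCH


def bayes_update(prior: float, p_evidence_if_true: float, p_evidence_if_false: float) -> float:
    """Posterior probability that the claim is true after one piece of evidence."""
    numerator = p_evidence_if_true * prior
    denominator = numerator + p_evidence_if_false * (1.0 - prior)
    return numerator / denominator


def may_deploy_execution_capital(claim: Claim) -> bool:
    """Only a State 3 claim unlocks execution capital."""
    return classify(claim) == ValidationState.VALIDATED_NEED
```

For example, `classify(Claim("Our clients need an AI-driven predictive maintenance tool"))` returns `ValidationState.HUNCH`. With an illustrative prior of 0.5 and evidence that is four times likelier if the claim is true (0.8 versus 0.2), `bayes_update` returns 0.8, which still only moves the claim within State 2.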

The Real Options Framework

How does an enterprise operationalize this transition from Hunch to Empirical Truth? By reframing R&D funding through the lens of Real Options Analysis (ROA). An R&D budget is a premium paid to purchase an option for a future strategic decision. Instead of funding a monolithic business case, the enterprise funds three staged bets to aggressively buy down epistemic uncertainty:

Phase 1: The Option to Explore: A microscopic investment to deploy First Principles Thinking and deconstruct the problem to ask, “Is this a real, valuable, unsolved problem?”

Phase 2: The Option to Validate: A moderate investment made entirely into data gathering and behavioral observation to find the true friction points.

Phase 3: The Option to Build & Test (Execute): Targeted capital is deployed to build a Minimum Viable Prototype (MVPr). Only when the MVPr proves the unit economics does the organization exercise the ultimate option: full-scale capital deployment.
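The staged logic above can be sketched as a pipeline that pays for one stage at a time and stops at the first failed gate. The dollar amounts and the wording of the Validate gate are hypothetical, chosen only to show the shape of the staging.

```python
from dataclasses import dataclass


@dataclass
class Stage:
    name: str
    cost: float         # premium paid to buy this stage's information
    gate_question: str  # must be answered "yes" before the next stage is funded


# Hypothetical costs; the source gives only relative sizes
# (microscopic, moderate, targeted).
STAGES = [
    Stage("Explore", 10_000, "Is this a real, valuable, unsolved problem?"),
    Stage("Validate", 50_000, "Do the data confirm the true friction points?"),
    Stage("Build & Test", 200_000, "Does the MVPr prove the unit economics?"),
]


def run_pipeline(gate_results: list[bool]) -> tuple[float, bool]:
    """Pay for each stage in order and stop at the first failed gate.

    Returns (capital spent, whether full-scale deployment is unlocked).
    """
    spent = 0.0
    for stage, passed in zip(STAGES, gate_results):
        spent += stage.cost
        if not passed:
            return spent, False
    return spent, len(gate_results) >= len(STAGES)
```

With these illustrative numbers, `run_pipeline([True, False])` returns `(60000.0, False)`: the organization has paid for two stages of information and walked away before committing the larger build budget.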

By establishing strict epistemological boundaries and funding innovation via Real Options, we ensure we’re solving undeniable axioms. With the starting point secured, we can turn our attention to the dangers of applying execution frameworks too early.

The Danger of a Flawed Starting Point: Amplifying the Error

Even when organizations adopt a staged, real-options approach to innovation, they face a critical vulnerability: the eagerness to begin mapping. The Jobs-to-be-Done framework is celebrated for its rigor, breaking down workflows into discrete, measurable steps. However, this same rigor makes a flawed starting point incredibly destructive.

Frameworks are multipliers. If your starting premise is an empirical truth, the Job Map scales clarity. If your starting premise is a symptom-level hunch, the Job Map geometrically expands the strategic error. Applying an execution-level framework to a State 1 Hunch doesn’t de-risk the innovation; it provides a highly detailed architectural blueprint of a hallucination.

Requirement Ownership: The First Line of Defense

In complex B2B environments, innovation initiatives rarely begin as blank slates. They begin as inherited requirements passed down from leadership. To sanitize these inputs, we can look to the aerospace and advanced manufacturing sectors, specifically the five-step engineering philosophy popularized by Elon Musk. The unbending first rule of this methodology is: Make the requirements less dumb. A requirement can’t belong to a faceless entity like “Legal” or “The Executive Team.” It must be attached to a specific, named human being. Once the mandate carries a name, accountability is established: you can sit down with that person, debate the underlying logic, and pressure-test the assumption.

The Socratic Scalpel: A 4-Phase Deconstruction

Once the requirement has a human owner, the strategist must sit down with that stakeholder and deconstruct the mandate, using the Socratic method not as an argumentative weapon but as a collaborative scalpel.

Phase 1: Preparation (Framing the Demand)

Never begin by questioning the stakeholder’s intelligence. Build psychological safety by framing the exercise around a shared risk: wasted capital and time. On a whiteboard, map the stakeholder’s demands into two stark columns: What We Know (observable, empirical facts) versus What We Believe (assumptions, analogies, and hunches).

Phase 2: Deconstruction (The 5 Socratic Plays)

Deploy five specific lines of inquiry to force an empirical defense:

Clarification: Ensure the core assertion is defined before challenging it.

Challenge Assumptions (The Inversion): Hunt for the foundational beliefs and invert them. (e.g., “What if buyers actually want a slower checkout to ensure compliance?”)

Seek Evidence: Force an empirical defense, pushing from State 1 to State 3.

Alternative Viewpoints: Expand the problem space by introducing other actors. (e.g., “Who actually benefits from the current manual process remaining broken?”)

Implications: Test the downstream effects of the proposed solution.

Phase 3: Validation (Drilling to Bedrock)

Drill down vertically until you hit a First Principle: a foundational truth that can’t be argued with. A convention is: “Our enterprise clients need automated reporting.” A First Principle is: “A rational corporate actor will prioritize actions that minimize their quantified financial liability.”

Phase 4: Synthesis (The New Problem Statement)

Don’t leave a power vacuum. Replace the old, flawed brief with a solution-agnostic problem statement that isolates the true struggle.

Old Flawed Brief: “We need to build a self-serve vendor portal to reduce procurement bottlenecks.”

New Validated Brief: “Our current approval routing penalizes mid-level managers for taking on risk, causing them to intentionally delay vendor onboarding. We’ve got to re-architect the risk-approval framework.”

By executing this Socratic Scalpel, the corporate theater of solution-jumping is replaced by a physics-based reality. With a sanitized problem statement in hand, we’ve got to navigate the most perilous trap in B2B innovation: identifying exactly who is trying to get the job done, and defining exactly what that job is.

Deconstructing the Ecosystem: Identifying the True Job Executor

In the Jobs-to-be-Done framework, a job without a clearly defined executor is merely a floating concept. If you don’t isolate the precise individual trying to execute the job, any subsequent mapping will become a tangled, incoherent mess of conflicting needs.

The B2B Complexity Trap

In a direct-to-consumer (B2C) environment, the ecosystem is usually linear. In B2B environments, the enterprise ecosystem is heavily fragmented. A company buys nothing; a coalition of distinct human actors does. This ecosystem consists of an alphabet soup of conflicting roles:

The Economic Buyer: The executive holding the budget.

The Champion/Influencer: The mid-level leader advocating for the solution.

The End-User: The frontline employee whose daily workflow is altered by the tool.

The “B2B Complexity Trap” occurs when an innovation team attempts to design a monolithic product that blends the jobs of all these stakeholders into a single interface. A product designed to simultaneously satisfy the CFO’s need for strict compliance and the Data Clerk’s need for rapid data entry inevitably fails at both.

The “Big Hire” vs. The “Little Hire”

To untangle the B2B ecosystem, we’ve got to differentiate between two distinct types of adoption:

The Big Hire represents the macro-decision to acquire a platform. The executive “hires” the software to give them peace of mind, strategic visibility, or cost reduction at a systemic level.

The Little Hire represents the micro-decision made by the frontline employee to actually log in and use the software. The frontline worker “hires” the software to minimize the clicks required to finish a task or avoid getting reprimanded.

If a product focuses entirely on the Big Hire, it’ll close the initial enterprise contract, but frontline workers will shadow-IT their way around the system. When renewal time arrives, the executive sees zero adoption data and churns the contract. Innovation requires designing targeted, distinct solutions for both without conflating their jobs.

The Framework in Action: Specifying the Who and the What

Because of this tension, my framework dictates absolute precision. Never define a target persona as a demographic, a company, or a department. When an innovation team designs for “The Logistics Department,” they’re designing by committee, which leads to feature creep.

Let’s walk through the exact, step-by-step thinking process the framework uses to force clarity on who the correct executor is, and what job we’re actually going to study.

Step 1: The Socratic Scalpel (Finding the ‘Why’)

We start with a flawed mandate: “We need to build an AI routing app for our commercial delivery fleet.” We don’t accept this. We ask why until we hit bedrock. Through deconstruction, we uncover the empirical truth: “We’re wasting fuel and missing delivery windows because we can’t adapt to unpredictable traffic or warehouse load times mid-shift.”

Step 2: The Accountability Filter (Finding the ‘Who’)

To pinpoint the exact human executor, the framework applies the Accountability Filter: Whose personal, professional performance is directly evaluated on solving this specific friction? Look at the ecosystem. You’ve got the Delivery Driver (The Little Hire) and the Fleet Dispatch Manager (The Big Hire). Who gets fired if fuel costs skyrocket and delivery windows are consistently missed? It isn’t the delivery driver—they’re just following the GPS interface they’re handed; they don’t control the fleet’s overarching efficiency. It’s the Fleet Dispatch Manager who holds the systemic liability for the fleet’s daily success and adaptability. If you don’t use this filter, you’ll end up building an app for the driver that doesn’t actually solve the routing logic. Therefore, the singular human role we’re building for is the Fleet Dispatch Manager.
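The Accountability Filter can be sketched as a selection over roles: keep only the role whose performance evaluation covers every metric tied to the friction. The `Role` structure and the metric labels are assumptions made for illustration, including the driver's "stops completed" metric, which does not come from the scenario above.

```python
from dataclasses import dataclass, field


@dataclass
class Role:
    title: str
    # Metrics this role is personally evaluated on (hypothetical labels).
    evaluated_on: set[str] = field(default_factory=set)


def accountability_filter(roles: list[Role], friction_metrics: set[str]) -> list[Role]:
    """Return the roles whose evaluation covers every friction metric."""
    return [role for role in roles if friction_metrics <= role.evaluated_on]


roles = [
    Role("Delivery Driver", {"stops completed"}),
    Role("Fleet Dispatch Manager", {"fuel cost", "on-time delivery windows"}),
]
executors = accountability_filter(roles, {"fuel cost", "on-time delivery windows"})
```

Here `executors` contains only the Fleet Dispatch Manager. If the filter returns several roles, or none, that is a signal the friction has not yet been stated precisely enough to name a single executor.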

Step 3: The Syntactic Formulation (Finding the ‘What’)

Now that we’ve isolated the Dispatch Manager, what’s the job we’re studying? It isn’t “Use an AI routing app.” That’s an analogy, a solution in disguise. The framework demands a strict, solution-agnostic syntax: [Action Verb] + [Object] + [Contextual Clarifier]. We must strip away the technology entirely. What is the executor fundamentally trying to do?

Action Verb: Adjust

Object: active fleet routes

Contextual Clarifier: during mid-shift disruptions

The true job we’ll study is: “Adjust active fleet routes during mid-shift disruptions.”
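The three-part syntax lends itself to a small data structure that assembles the statement and flags solution language. This is a rough sketch; `SOLUTION_TERMS` is a hypothetical starter list, not a vocabulary defined by the framework.

```python
from dataclasses import dataclass

# Hypothetical list of solution words to reject; extend it for your domain.
SOLUTION_TERMS = {"app", "ai", "software", "portal", "dashboard", "headset"}


@dataclass(frozen=True)
class JobStatement:
    action_verb: str
    obj: str
    contextual_clarifier: str

    def render(self) -> str:
        """Join the parts as [Action Verb] + [Object] + [Contextual Clarifier]."""
        parts = [self.action_verb.capitalize(), self.obj, self.contextual_clarifier]
        return " ".join(part for part in parts if part)

    def solution_terms_found(self) -> set[str]:
        """Return any solution words that leaked into the statement."""
        words = {word.strip(".,").lower() for word in self.render().split()}
        return words & SOLUTION_TERMS


job = JobStatement("adjust", "active fleet routes", "during mid-shift disruptions")
print(job.render())  # Adjust active fleet routes during mid-shift disruptions
```

Running the same check on the rejected mandate, `JobStatement("use", "an AI routing app", "").solution_terms_found()`, returns the terms `ai` and `app`, which marks it as a solution in disguise.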

By systematically applying the Accountability Filter to find the who, and the Syntactic Formulation to find the what, we’ve stripped away the AI app analogy. To find the exact location of the market opportunity, we’ve got to plot this executor against the finite chronology of human interaction: The 17 Universal Journeys.

The 17 Universal Journeys: Locating the Friction

A common pitfall in enterprise strategy is the reliance on vague descriptions of customer friction, such as “Our onboarding process is terrible” or “The user experience is clunky.” To engineer a precise solution, the problem-architect must recognize that customer experiences fall into finite, predictable, chronological patterns.

By categorizing user friction into a rigid taxonomy, strategists can isolate the exact phase of the lifecycle that requires innovation through the framework of the 17 Universal Customer Journeys.

The Chronology of Customer Experience

Every interaction a human being has with a product or platform can be mapped to one (or more) of 17 distinct journeys. Each demands a vastly different structural intervention:

1. Acquisition & Setup Journeys

The Selection Journey: Identifying and choosing the most suitable solution.

The Purchase Journey: Transactional action and logistics of acquisition.

The Delivery Journey: Logistics of how a product reaches the customer.

The Installation Journey: Technical process of preparing the solution.

The Configuration Journey: Initial setup and tuning to operationalize it.

The Integration Journey: Connecting the new solution with legacy systems.

The Learning Journey: Understanding how to extract value from the solution.

2. Ongoing Execution Journeys

The Customization Journey: Tailoring the ongoing solution to specific preferences.

The Utilization Journey: The core, day-to-day execution and use.

3. Upkeep & Maintenance Journeys

The Maintenance Journey: Ongoing care and updates required to prevent failure.

The Repair Journey: Diagnostic and resolution process when the solution breaks.

The Cleaning Journey: Process of sanitizing or clearing out waste/data-debt.

The Storage Journey: Safeguarding, archiving, or pausing the solution.

The Relocation Journey: Moving the solution across environments (e.g., migrations).

4. End-of-Life Journeys

The Upgrade Journey: Enhancing the solution to a higher tier of capability.

The Replacement Journey: Substituting a failed solution with a direct alternative.

The Disposal Journey: End-of-life process, including data deletion or offboarding.
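Because the taxonomy is finite, it can be held as a plain lookup table so that every friction report is tagged with exactly one journey. The sketch below copies the 17 journeys listed above; the function name is an assumption.

```python
# The four phases and 17 journeys, as listed in this section.
JOURNEYS = {
    "Acquisition & Setup": [
        "Selection", "Purchase", "Delivery", "Installation",
        "Configuration", "Integration", "Learning",
    ],
    "Ongoing Execution": ["Customization", "Utilization"],
    "Upkeep & Maintenance": [
        "Maintenance", "Repair", "Cleaning", "Storage", "Relocation",
    ],
    "End-of-Life": ["Upgrade", "Replacement", "Disposal"],
}


def phase_of(journey: str) -> str:
    """Return the lifecycle phase a journey belongs to."""
    for phase, journeys in JOURNEYS.items():
        if journey in journeys:
            return phase
    raise ValueError(f"Unknown journey: {journey}")
```

A complaint such as "Our onboarding process is terrible" cannot be tagged until someone decides whether it is really an Installation, Configuration, Integration, or Learning problem, which is exactly the precision the taxonomy is meant to force.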

B2B Context Application: The Graveyard of Integration

In consumer markets (B2C), innovation capital is almost exclusively poured into the Utilization Journey. Because many product leaders cut their teeth in B2C, they assume that if they build a beautiful, consumer-grade utilization interface, B2B enterprise adoption will follow.

In B2B environments, software frequently fails long before the frontline user reaches the Utilization Journey. The heavy lifting resides in the Integration, Configuration, and Learning journeys. If connecting a new CRM to a twenty-year-old legacy ERP database takes six months of grueling IT labor, the project is dead on arrival.

Defensible B2B monopolies aren’t built by merely optimizing utilization; they’re built by drastically lowering the friction of Integration and Configuration. By establishing the true Job Executor and isolating their friction to a specific Universal Journey, the problem space is locked. However, to execute this without falling back into solution-bias, we’ve got to abandon feature-driven roadmaps and adhere to a strict chronological deconstruction of human execution.

Mapping the Real Job: The 9-Step Chronology

When asked to map a customer’s process, product managers intuitively map the customer’s interaction with the current product. They map screens, clicks, forms, and workflows. This isn’t a Job Map; it’s a process map of a legacy solution.

The Core Rule of Mapping is absolute: A Job Map must be completely solution-agnostic. It must describe what the executor is trying to accomplish, not how they’re currently doing it.

The 9 Universal Steps of Execution

Whether the Job Executor is a cardiac surgeon or an Accounts Payable Clerk, the fundamental sequence of human execution unfolds across nine finite stages:

Define: Assess the requirements upfront.

Locate: Gather, access, or retrieve necessary inputs or resources.

Prepare: Organize or integrate inputs to facilitate execution.

Confirm: Verify readiness or make a final go/no-go decision.

Execute: The primary, core action to achieve the job’s overarching goal.

Monitor: Ensure the process is proceeding successfully and safely.

Resolve: Troubleshoot, fix, or restore the system if deviations occur.

Modify: Make adjustments to the execution environment to optimize.

Conclude: Final actions taken to wrap up and store outputs.

A Job Map built upon these nine steps must adhere to the MECE principle: it must be Mutually Exclusive (no conceptual overlap) and Collectively Exhaustive (covering the entire scope without gaps).
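A first-pass MECE check on a draft Job Map can be automated, as in the sketch below. It catches only mechanical problems: empty steps, unknown step names, and the same activity text filed under two steps. Conceptual overlap still needs human review. The function name and the map structure (step name mapped to a list of activities) are assumptions.

```python
JOB_STEPS = [
    "Define", "Locate", "Prepare", "Confirm", "Execute",
    "Monitor", "Resolve", "Modify", "Conclude",
]


def check_job_map(job_map: dict[str, list[str]]) -> list[str]:
    """Return a list of problems; an empty list means these checks pass."""
    problems = []
    # Collectively exhaustive: every universal step needs at least one activity.
    for step in JOB_STEPS:
        if not job_map.get(step):
            problems.append(f"gap: no activity under '{step}'")
    for step in job_map:
        if step not in JOB_STEPS:
            problems.append(f"unknown step: '{step}'")
    # Mutually exclusive: the same activity may not sit under two steps.
    seen: dict[str, str] = {}
    for step, activities in job_map.items():
        for activity in activities:
            key = activity.strip().lower()
            if key in seen and seen[key] != step:
                problems.append(
                    f"overlap: '{activity}' appears under '{seen[key]}' and '{step}'"
                )
            seen.setdefault(key, step)
    return problems
```

A map that omits the Monitor step returns `["gap: no activity under 'Monitor'"]`, which is a prompt to go back to the executor rather than to invent an activity.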

The JTBD Verb Lexicon: Engineering Customer Success Statements

A chronological map tells you the sequence of events, but to measure success, we’ve got to generate Customer Success Statements (CSS) for every step on the map. The phrasing of a CSS is governed by a strict syntactic formula:

[Direction of Improvement] + [Metric] + [Object of Control] + [Contextual Clarifier]

Strategists have got to rely on a highly restricted JTBD Verb Lexicon:

The Direction of Improvement: Every CSS has got to begin with Minimize (for reducing friction) or Increase (for augmenting positive value).

The Vague Blacklist: Manage, handle, perform, do, facilitate, enable, empower, ensure, optimize. (You can’t mathematically measure “empowerment”).

The Subjective Blacklist: Feel, look, seem, appear. (These are emotional states, not functional B2B job metrics).

The Solution-Specific Blacklist: Click, download, input, submit, log in, export, print. (These describe interactions with a specific technological interface, blinding you to innovation).
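The lexicon rules above are mechanical enough to lint automatically. The sketch below checks the opening verb and scans for blacklisted terms as whole words. It is an illustration only: inflected forms such as "feeling" are not caught, and the sample statement in the usage note is a hypothetical CSS built from the fleet example.

```python
import re

DIRECTIONS = ("Minimize", "Increase")
BLACKLIST = {
    "vague": {"manage", "handle", "perform", "do", "facilitate", "enable",
              "empower", "ensure", "optimize"},
    "subjective": {"feel", "look", "seem", "appear"},
    "solution-specific": {"click", "download", "input", "submit", "log in",
                          "export", "print"},
}


def lint_css(statement: str) -> list[str]:
    """Return lexicon violations for one Customer Success Statement."""
    issues = []
    if not statement.startswith(DIRECTIONS):
        issues.append("must begin with 'Minimize' or 'Increase'")
    lowered = statement.lower()
    for category, terms in BLACKLIST.items():
        for term in sorted(terms):
            # Whole-word match so that "do" does not fire on "document".
            if re.search(rf"\b{re.escape(term)}\b", lowered):
                issues.append(f"{category} term: '{term}'")
    return issues
```

`lint_css("Minimize the time it takes to adjust active fleet routes during mid-shift disruptions")` returns an empty list, while `lint_css("Empower drivers to feel in control")` reports a bad opening verb, the vague term `empower`, and the subjective term `feel`.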

With the qualitative assumptions finally stripped away, the enterprise is prepared to transition from mapping to validation, transforming these carefully crafted metrics into undeniable empirical truths.

The Quantitative Mirage: How a Bad Map Bankrupts the Innovation Pipeline

The entire purpose of the Real Options innovation method is to ruthlessly de-risk your strategy. It’s built to make capital-efficient investment decisions, buying information in stages so you don’t blow millions on a guess. But here’s the dirty secret: if you don’t define the proper Job Executor and the correct Job Map upfront, the whole system breaks. You aren’t de-risking anything. You’ve just built a highly rigorous, mathematically precise waste-generation machine.

When you get the who and the what wrong, your strategy goes off the rails. And the scariest part? It won’t look like it’s failing until it’s too late. It’ll look like you’re succeeding with flying colors.

The Quantitative Mirage (The Option to Validate)

Let’s say your team skipped the Socratic Scalpel (or trusted an expert consultant). You picked the wrong Job Executor—targeting the Delivery Driver instead of the Fleet Dispatch Manager. Worse, you mapped a wildly abstract, subjective job. Instead of mapping the functional reality of “adjusting active routes during mid-shift disruptions,” your team mapped a fluffy, emotional concept like “enhancing driver empowerment.”

Or maybe you make an even deadlier mistake: you invent a completely abstract persona. You decide your executor is the “B2B Omni-Channel Growth Synergist” or “The Marketing Department.” That isn’t a real human; that’s a buzzword or a committee. Because you targeted a ghost, you end up mapping a Frankenstein job like “streamlining cross-functional data synthesis.” This fake job smashes the CFO’s compliance needs, the IT admin’s database integration, and the marketer’s campaign launch into one massive, bloated workflow.

Ignorant of this fatal error, you proudly move into Phase 2: The Option to Validate.

You take your carefully crafted - yet completely abstract and conflated - Customer Success Statements (CSS) and survey the market. Now, you might be at a lean startup that usually skips extensive surveys due to the expense, but let’s assume you’ve got the budget and do it by the book. You feed the responses into the Unified Validation Engine. Because you’re using strict JTBD mathematics, you don’t fall for the trap of ordinal averaging or the flawed 2I-S formula. You calculate the Top-Box Gap (G) to find market urgency, and measure the Derived Importance (r) using Pearson correlations against overall satisfaction.

The engine spits out your Prioritized Outcomes Heatmap, and the Objective Need Scores look incredible. You’ve got massive, glaring red targets indicating exactly what you should build next. The data screams “green light!”

But it’s a complete, terrifying mirage.

Why? Because of inflation bias and context collapse. If you ask a Delivery Driver to rate “Increase my feeling of control over daily routes,” they will smash that 5/5 button. Or, if you survey your made-up “Growth Synergist” about “minimizing the time it takes to synthesize cross-functional data,” the CFO, the IT admin, and the marketer are all going to hit 5/5 for completely different reasons. The CFO wants audit trails; the marketer wants leads.

The data looks pristine. It tells you you’ve found a goldmine. But “driver empowerment” doesn’t hold the economic liability for the fleet’s fuel costs. And your “cross-functional synthesis” isn’t a real workflow. The data is completely disconnected from the actual business driver. Your resulting heatmap is a disjointed, incoherent set of underserved emotional outcomes and conflated tasks that are almost impossible to aggregate into a cohesive business solution.

You’ve successfully used elite statistical rigor to validate a hallucination. You think you’ve de-risked the investment, but you’re actually just gaining extreme, misplaced confidence in the wrong direction.
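For readers who want to see the arithmetic behind the two scores named in this section, here is a minimal sketch. The article does not spell out the formulas, so the definitions are assumptions: the Top-Box Gap is taken as the share of respondents rating importance 5 out of 5 minus the share rating satisfaction 5 out of 5, and Derived Importance as the Pearson correlation between an outcome's satisfaction ratings and overall satisfaction. The ratings in the usage note are made-up toy values.

```python
from math import sqrt


def top_box_share(ratings: list[int], top: int = 5) -> float:
    """Share of respondents who gave the top rating."""
    return sum(1 for rating in ratings if rating >= top) / len(ratings)


def top_box_gap(importance: list[int], satisfaction: list[int]) -> float:
    """Assumed definition of G: top-box importance minus top-box satisfaction."""
    return top_box_share(importance) - top_box_share(satisfaction)


def pearson_r(x: list[float], y: list[float]) -> float:
    """Pearson correlation; raises ZeroDivisionError if either series is constant."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / sqrt(var_x * var_y)
```

With toy importance ratings `[5, 5, 4, 5, 3]` and satisfaction ratings `[2, 5, 1, 3, 2]`, the gap is roughly 0.4 (60% top-box importance against 20% top-box satisfaction). Python 3.10 and later also provide `statistics.correlation` for the Pearson step. Neither number can tell you whether the statement was put to the right executor, which is the point of this section.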

The MVPr Collision (The Option to Build)

High on that false positive from your survey data, leadership eagerly exercises the Option to Build. You move into Phase 3 and design your Minimum Viable Prototype (MVPr).

You don’t write a single line of code. You do exactly what the framework tells you to do: you build a manual, “Wizard of Oz” concierge service to test the “driver empowerment” or “cross-functional synthesis” mechanic in the wild. Your team manually curates a “driver support feed” and pushes “route autonomy” options directly to the drivers’ phones to see if they finish their shifts faster. Or you manually compile massive cross-departmental data dossiers and drop them on a marketer’s desk.

And then, you hit a brick wall.

The behavior doesn’t change. The drivers ignore the autonomy features because they’re just trying to survive traffic, and the Dispatcher—the actual economic buyer who cares about the bottom line—never even sees the intervention. Meanwhile, that marketer looks at your massive cross-functional dossier, gets overwhelmed by IT and finance data they don’t understand, and throws it in the trash. The solution mechanic is wildly, spectacularly invalidated by reality.

You handed a brilliant solution for a fake, abstract job to the wrong person.

The Cost of the Illusion

Now, you might be thinking, “Well, the MVPr failed, but at least we didn’t build the full software MVP! The circuit breaker worked!”

Sure, failing at the MVPr stage is cheaper than launching a fully scaled B2B platform. But let’s be real: it’s still a massive, unforgivable waste of capital. You’ve blown through your Phase 1 exploration time, burned your Phase 2 survey budget, exhausted your customers with irrelevant questionnaires, and wasted weeks running a concierge test that never stood a chance. It’s even worse with traditional waterfall research, where you frequently pay six figures in advance just to determine whether there’s a problem at all.

Worse, you’ve burned your stakeholders’ goodwill. When the MVPr fails this catastrophically, executives don’t usually blame the starting premise; they blame the framework. They’ll say JTBD doesn’t work, and they’ll go right back to “solution-jumping” and building whatever the loudest executive wants.

The entire point of this methodology is to buy information logically so you can deploy capital efficiently. When you rush the starting point - when you fail to isolate the singular human executor and map their true, solution-agnostic struggle - you bypass the very de-risking mechanisms you tried to put in place.

If your map is abstract and misaligned, the mathematics won’t save you. They’ll just help you crash with absolute precision.

From Principle to Priority: Synthesizing the Solution

Through the rigorous application of First Principles thinking, the Socratic Scalpel, the 17 Universal Journeys, and behavioral validation via the MVPr, the problem-architect has mathematically and behaviorally de-risked the innovation pipeline. But what exactly are we scaling?

If an organization takes a validated customer job and simply builds a standard software application to solve it, they remain highly vulnerable. To transform a validated job into a defensible monopoly, the enterprise has got to shift from principle to priority.

The Trap of “Product Performance”

When asked to innovate, 95% of product teams default to Product Performance: adding new features or functionality. Product Performance is the weakest and most easily copied form of innovation. To create a monopoly, problem-architects must surround their core offering with “Configuration Moats” and “Experience Moats” (based on Doblin’s Ten Types of Innovation).

Configuration Moats (The Backend): These innovations define how you organize your assets and generate revenue. (e.g., Profit Models converting value into cash differently, leveraging Partner Networks, or streamlining internal Structure & Process).

Experience Moats (The Front-End): These define how you interact with and retain the market, ensuring the “Little Hire” becomes fiercely loyal to your platform. (e.g., delivering instant utility through un-traditional Channels, or building mission-driven Brand Engagement).

The Structural Inversion Leap

To truly dominate a B2B sector, you’ve got to orchestrate a disruptive leap by deploying a Structural Inversion, turning the legacy economics of the industry upside down:

CapEx Inversion (The Physical Asset Leap): Externalizing the physical “atoms” to the market while internalizing the “intelligence” (the orchestration software), eliminating the need to acquire heavy Capital Expenditures.

Labor Inversion (The AI Leap): Decoupling revenue from human OPEX by shifting the execution to scalable AI agentic compute, driving the marginal cost of delivery to near zero.

Network Inversion (The Platform Leap): Shifting a linear pipeline where a company creates value for a consumer into a decentralized model where users create value for other users (e.g., pooled risk data).

Synthesizing the Real Options

The architect’s final duty is to consume all the validated data and present leadership with three distinct, non-overlapping strategic pathways—framed as Real Options:

Pathway A: Persona Expansion (The Lateral Move). Selling the newly optimized, validated core solution to an adjacent Job Executor down the value chain.

Pathway B: Sustaining Innovation (The Core Defense). Fortifying the core product by building out the Profit Model and Experience moats to protect existing market share from churn.

Pathway C: Disruptive Vision (The Inversion Leap). Integrating the Structural Inversion output to render the current market pipeline completely obsolete, shifting the industry dynamics entirely.

By presenting these as Real Options, leadership isn’t being asked to blindly guess on a five-year forecast; they’re choosing a validated pathway based on their current risk appetite.

Conclusion: The Architect vs. The Firefighter

When organizations rely on “solution-jumping” and analogical reasoning, they reward the corporate Firefighter who frantically builds unvalidated features to extinguish surface-level symptoms. To achieve what others deem impossible, organizations have got to embrace the mindset of the Problem-Architect.

By adopting First Principles thinking, aggressively interrogating demands, locating friction within finite journeys, and deploying behavioral validation, the enterprise neutralizes the human bottleneck. This is how you stop reasoning by analogy, fold time, and build products that categorically redefine the market.
